Chapter 6: Adding Images to Your Site

Revised June 2003


Independence Day, the book by Richard Ford, about a long uneventful weekend in the life of Frank Bascombe, a divorced real estate salesman in Haddam, New Jersey, won the Pulitzer Prize for Fiction:

"Unmarried men in their forties, if we don't subside entirely into the landscape, often lose important credibility and can even attract unwholesome attention in a small, conservative community. And in Haddam, in my new circumstances, I felt I was perhaps becoming the personage I least wanted to be and, in the years since my divorce, had feared being: the suspicious bachelor, the man whose life has no mystery, the graying, slightly jowly, slightly too tanned and trim middle-ager, driving around town in a cheesy '58 Chevy ragtop polished to a squeak, always alone on balmy summer nights, wearing a faded yellow polo shirt and green suntans, elbow over the window top, listening to progressive jazz, while smiling and pretending to have everything under control, when in fact there was nothing to control."

The script of Independence Day, the movie, in which aliens blow up the White House, wasn't quite as well-crafted. Yet more people went to see the movie than read the book.

People like pictures.

People like movies even better than pictures but, for most publishers, they are unrealistically expensive to produce and, for most users even in 2003, they are unrealistically slow to download.

This chapter documents a method for building an image library and presenting it to each Web user in the best way for that particular person.

Computer monitors don't have the resolution of photographic prints but they can display a wide range of tones. If you take care with your images, you may be able to present the user with the jewel-like experience of viewing a photographic slide.


High-quality black-and-white photographic prints have a contrast range of about 100 to 1. That is, the most reflective portion of a print (whitest highlight) reflects 100 times more light than the darkest portion (blackest shadow). The ideal surface for reflecting a lot of light would be extremely glossy and smooth. The ideal surface for reflecting very little light would be something like felt.

It is not currently possible to make one sheet of paper that has amazingly reflective areas and amazingly absorptive areas. Magazine pages are generally much worse than photographic paper in this regard. Highlights are not very white and shadows are not very black, resulting in a contrast ratio of about 20 to 1.

Slides have several times the contrast range of a print. That's because they work by blocking the transmission of light. If the slide is very clear, the light source comes through almost undimmed. In portions of the slide that are nearly black, the light source is obscured. Photographers always suffer heartbreak when they print their slides onto paper because it seems that so much shadow and highlight detail is lost. Ansel Adams devoted his whole career to refining the Zone System, a careful way of mapping the brightness range in a natural scene (as much as 10,000 to 1) into the 100 to 1 brightness range available in photographic paper.

Computer monitors are closer to slides than prints in their ability to represent contrast. Shadows correspond to portions of the monitor where the phosphors are turned off. Consequently, any light that comes from these areas is just room light reflected off the glass face of the monitor. You would think that the contrast ratio from a computer monitor would be 256 to 1 since display cards present 256 levels of intensity to the software. However, monitors don't respond linearly to increased voltage. Contrast ratios between 100 to 1 and 170 to 1 are therefore more typical.

Most Web publishers never take advantage of the monitor's ability to represent a wide range of tones. Rather than starting with the original slide or negative, they slap a photo down on a flatbed scanner. A photographic negative can only hold a tiny portion of the tonal range present in the original scene. A proof print from that negative has even less tonal range. So it is unsurprising that the digital image resulting from this scanned print is flat and uninspiring.

Obtaining a high-quality scan isn't so difficult. You must go back to whatever was in the camera, that is, the original negative or slide. You should not scan from a dupe slide. You should not scan from a print. The scan should be made in a dust-free room or you will spend the rest of your life retouching in PhotoShop. Though resolution is the most often mentioned scanner specification, it is not nearly as important as the maximum density that can be scanned. You want a scanner that can see deep into shadows, especially with slides.

Never going back to the original slide or negative again means never. If a print magazine requests a high-resolution image to promote your site, you should be able to deliver a 2,000x3,000 pixel scan without having to dig up the slide again. If there is a fire at your facility and all of your slides burn up, you should have a digital backup stored elsewhere that is sufficient for all your future needs.

Quick retrieval means that if a user says, "I like the picture of the dog holding the rope in SQL for Web Nerds (http://philip.greenspun.com/sql/)," it is easy to find the high-resolution digital file.

Kodak PhotoCD is a scanning standard that kills three birds with one, er, CD. Every image on a CD is available in five resolutions, the highest of which is 2,000x3,000. Kodak will tell you that you can make photographic-quality prints up to 20x30 inches in size from the scans. Scans are made from original negatives or slides in dust-controlled environments inside photo labs. The newest generation (1996) of PhotoCD scanners can read reasonably deeply into slide film shadows. If you want more shadow detail and an additional 4,000x6,000 scan, you can get a Pro PhotoCD made.


The full PhotoCD scans are in a proprietary format that browsers don't understand but tools such as Adobe PhotoShop do. Each .pcd file is about 5 MB in size and is therefore reasonably quick for a print publisher to download.

Quickly locating an image on PhotoCD is facilitated by Kodak's provision of index prints. You can work through a few hundred images per minute by looking over the index prints attached to each CD-ROM.

What does all of this cost? About $1 to $3 for a standard scan from a 35mm original; about $15 for a Pro scan from a medium format or 4x5-inch original. When you consider that you are getting 500MB of media with each PhotoCD, the scanning per se is free (in other words, it is cheaper to have a PhotoCD made than to buy a hard disk capable of storing that much data).

See http://www.photo.net/sources/labs for a few suggestions of good PhotoCD vendors.

Suppose that a publisher has a high-resolution digital image in a large uncompressed file. How to make this image available to Web users?

What most commercial publishers do is manually produce a single JPEG that will fit nicely on the same page as the text, on a small monitor. This is usually about 180x250 pixels in size. The user gets a little graphical relief from plain text but can't extract much information from the photo unless it is a very simple image.

At right: an example of an unlinked, and hence frustrating, thumbnail. It is a ruin in Canyon de Chelly and users who want to see construction details of this Anasazi dwelling won't get any help from this image.

A slightly more sophisticated approach is to produce two JPEGs. Place the smaller one in-line on the page and make it a hyperlink to the larger JPEG. This helps the curious user but note that there is no effective way to caption the image and the user only gets to choose between two sizes unless you uglify your pages by adding a second hyperlink to a "huge" JPEG.


At right: a thumbnail linked to a larger, static JPEG. Note that there is no convenient way to associate a caption with either image and certainly the user won't get any context once he has requested the larger JPEG. Note: this is, of course, the co-author Alex at the age of three months, from http://philip.greenspun.com/dogs/alex

Think about the user interface of the "small linked to large" system from the user's perspective. The publisher shows you a small picture. If you want a bigger view, you click on it. This is fundamentally the correct user interface, but it is a shame that clicking on an image doesn't also result in the display of a caption, of technical details behind the photograph, of options for yet larger sizes.

What readers really want is for a thumbnail to be a link to an HTML page showing the caption, technical details, a large JPEG, and links to still-larger JPEGs or perhaps print-quality versions. This will involve a lot of work unless the publisher has automated production from initial high-res scan through to the Web directories (more on that below), but the users get the best possible interface... or do they?

What should a server infer from the user's action in clicking on a thumbnail? That he wants to see a 512x768 pixel JPEG? What if he has a really big monitor? A really small monitor? A really slow Internet connection? A really fast Internet connection? What if he has previously told me what he wants?

Most of the thumbnails on my site are now linked to computer programs. Since I run AOLserver, these are written in the AOLserver Tcl API but they could just as easily be Perl CGI scripts or Microsoft Active Server Pages. The programs first check the Magic Cookie headers on requests for larger images. If the user has previously said "I'm a nerd with a 1600x1200 pixel monitor and a fast connection, please always give me 1000x1500 pixel JPEGs by default" then the program sees the cookie and generates an HTML page with a large JPEG on it, plus the caption and tech details (if available). If there is no cookie, the program has a reasonable default (in 2003, it is to serve an HTML page with a 512x768 pixel JPEG, captions, and links) plus a "click here to personalize this site" option. Try it out with the image at right.
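The cookie check can be sketched in a few lines. What follows is a minimal Python illustration of the idea, not the AOLserver Tcl code that runs on the site. The cookie name `image_size_pref`, the preference names, and both function names are hypothetical; the file naming (`bearfight-2.3.jpg` for the third resolution of image 2) follows the pattern used for the thumbnails later in this chapter.

```python
from http.cookies import SimpleCookie

COOKIE_NAME = "image_size_pref"   # hypothetical cookie name
# Preference name -> PhotoCD resolution number (3 = 512x768, 4 = 1024x1536).
RESOLUTION_FOR_PREF = {"medium": 3, "large": 4}
DEFAULT_PREF = "medium"           # the 512x768 default described above


def preferred_resolution(cookie_header):
    """Pick a resolution number from the raw Cookie request header (or None)."""
    cookie = SimpleCookie()
    cookie.load(cookie_header or "")
    morsel = cookie.get(COOKIE_NAME)
    pref = morsel.value if morsel is not None else DEFAULT_PREF
    # Unknown or stale preference values fall back to the default.
    return RESOLUTION_FOR_PREF.get(pref, RESOLUTION_FOR_PREF[DEFAULT_PREF])


def jpeg_url(url_stub, filename_stub, cookie_header):
    """URL of the JPEG to place on the generated HTML page."""
    resolution = preferred_resolution(cookie_header)
    return f"{url_stub}{filename_stub}.{resolution}.jpg"


print(jpeg_url("/images/pcd1765/", "bearfight-2", "image_size_pref=large"))
```

A request with no cookie gets the default size; the "click here to personalize this site" page would be the place that sets the cookie.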

| Image | base/16 | base/4 | base | base*4 | base*16 |
|---|---|---|---|---|---|
| Resolution | 128x192 | 256x384 | 512x768 | 1024x1536 | 2kx3k |
| Amount | 100 | 100 | 100 | 100 | 100 |
| Radius | 0.25 | 0.5 | 1.0 | 2.0 | 4.0 |
| Threshold | 2 | 2 | 2 | 2 | 2 |

Be prepared to undo and change the settings if the result isn't what you want. Unsharp Mask finds edges between areas of color and increases the contrast along the edge. The Amount setting controls the strength of the filter. I usually stick to numbers between 60 percent and 140 percent. Radius controls how many pixels in from the color edge are affected (so you need higher numbers for bigger images). Threshold controls how different the colors on opposing sides of an edge have to be before the filter goes to work. You need a higher threshold for noisy images or ones with subtle color shifts that you want left unsharpened.
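The Unsharp Mask settings listed above follow a simple rule: Amount and Threshold stay fixed while Radius grows in proportion to the linear size of the image, with 1.0 at the 512x768 "base" size. Here is a minimal Python sketch of that rule. The function name is hypothetical, and the rule is only inferred from the five settings given, so treat values for other sizes as a starting point to be checked by eye.

```python
BASE_LONG_EDGE = 768   # the "base" image is 512x768 pixels
BASE_RADIUS = 1.0      # Unsharp Mask radius used at the base size


def unsharp_settings(long_edge, amount=100, threshold=2):
    """Unsharp Mask settings for an image whose longer side is long_edge pixels."""
    radius = BASE_RADIUS * long_edge / BASE_LONG_EDGE
    return {"amount": amount, "radius": radius, "threshold": threshold}


for edge in (192, 384, 768, 1536, 3072):
    print(edge, unsharp_settings(edge))
```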

One of the rationales behind this approach is that you never sharpen twice and that sharpening is always the very last image processing step. That's why an intermediate PhotoShop native format file is necessary.

Here's the format for the description file:

line 1: spec for which resolutions to convert

line 2: on which resolution images to write copyright notice | notice

line 3: apply sharpening? (scanning blurs images)

line 4: add black borders (nice for negatives)

line 5: URL stub (where on the server will this image reside?)

lines 6-N: one line/image, formatted as the example below

<number on disk>|<target file name>|<which_resolutions>|<caption>|<tech details>|<alt>|<tutorial info>

and an example from one of the disks behind http://www.photo.net/samantha/:

101100

001111|copyright 1993 philg@mit.edu

sharpen:yes

borders:yes

url_stub:/images/pcd1765/

2|bearfight||Brooks Falls, Katmai National Park|Nikon 8008, 300/2.8 AF lens (used in MF mode), FOBA ballhead, tripod, Kodak Ektar 25 film

3|montreal-flower||In Montreal's Botanical Gardens|Nikon 8008, 60/2.8 macro lens, Fuji Velvia

4|montreal-olympic-stadium||Montreal's Olympic Stadium|Nikon 8008, 20/2.8?, Fuji Velvia

The first line says "By default, for each image, convert the first, third, and fourth resolutions from the PhotoCD file." The second line says, "On the third, fourth, fifth, and sixth resolutions, add 'copyright 1993 philg@mit.edu' to the bottom right corner of the JPEG." The next few lines specify that all images from this disk will be sharpened and surrounded by black borders. The files will reside in the /images/pcd1765/ directory from my server root.

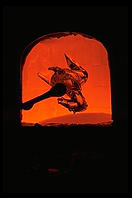

The first image marked for conversion is actually number 2 on the disk (at right). The JPEG file is specified to contain the word "bearfight". The next field is empty ("||") because this image should be converted to the default resolutions (1, 3, and 4). The caption comes next and, finally, some technical information behind the photo (most publishers wouldn't care about this but remember that photo.net is visited by a lot of photographers who are improving their craft).
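To make the file format concrete, here is a minimal Python sketch of a parser for it. It is an illustration only, not the Perl conversion script described later in this chapter. The names `ImageSpec`, `mask_to_resolutions`, and `parse_description` are hypothetical, and the sketch assumes the five header lines always appear in the order shown.

```python
from dataclasses import dataclass

EXAMPLE = """
101100
001111|copyright 1993 philg@mit.edu
sharpen:yes
borders:yes
url_stub:/images/pcd1765/
2|bearfight||Brooks Falls, Katmai National Park|Nikon 8008, 300/2.8 AF lens
3|montreal-flower||In Montreal's Botanical Gardens|Nikon 8008, 60/2.8 macro lens, Fuji Velvia
"""


@dataclass
class ImageSpec:
    number_on_disk: int
    filename_stub: str
    resolutions: list
    caption: str
    tech_details: str


def mask_to_resolutions(mask):
    """Turn a bit string such as '101100' into 1-based positions [1, 3, 4]."""
    return [i for i, bit in enumerate(mask, start=1) if bit == "1"]


def parse_description(text):
    lines = [ln.strip() for ln in text.splitlines() if ln.strip()]
    default_resolutions = mask_to_resolutions(lines[0])
    copyright_mask, _, copyright_label = lines[1].partition("|")
    # Lines 3-5 are key:value pairs (sharpen, borders, url_stub).
    options = dict(ln.split(":", 1) for ln in lines[2:5])
    images = []
    for ln in lines[5:]:
        fields = ln.split("|")
        fields += [""] * (7 - len(fields))   # trailing fields are optional
        number, stub, mask, caption, tech = fields[:5]
        images.append(ImageSpec(
            int(number), stub,
            # An empty resolution field means "use the defaults from line 1".
            mask_to_resolutions(mask) if mask else default_resolutions,
            caption, tech))
    return {
        "default_resolutions": default_resolutions,
        "copyright_resolutions": mask_to_resolutions(copyright_mask),
        "copyright_label": copyright_label,
        "options": options,
        "images": images,
    }


spec = parse_description(EXAMPLE)
print(spec["images"][0].filename_stub, spec["images"][0].resolutions)
```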


What does a Web page author get for all of this? Most valuably, /images/pcd1765/index-fpx. Here's a fragment of the HTML from that undifferentiated list of all the photos on the disk:

<a href="/images/pcd1765/bearfight-2.tcl">

<img HEIGHT=134 WIDTH=196 src="/images/pcd1765/bearfight-2.1.jpg"

ALT="Brooks Falls, Katmai National Park">

</a>

There are a couple of things to note about the .tcl script to which the thumbnail points. First, it runs inside the AOLserver process rather than as a separately forked CGI script. This is at least a factor of 10 more efficient than starting up a Perl script or whatever. Since the script executes only when a user clicks on a thumbnail, the efficiency probably doesn't matter unless you have an unusually popular site on an unusually wimpy server. Still, it is nice to conserve a server's power for image processing and running a relational database management system.

The second point is about software engineering and is hard to illustrate without showing the source code for the Tcl script:

set the_whole_page \

[philg_img_target $conn \

"/images/pcd1765/" \

"IMG0002.fpx" \

"bearfight-2" \

"784" "536" \

"copyright 1993 philg@mit.edu" \

"Brooks Falls, Katmai National Park" \

"Nikon 8008, 300/2.8 AF lens (used in MF mode),

FOBA ballhead, tripod, Kodak Ektar 25 film" \

"" ]

ns_return 200 text/html $the_whole_page

The script does nothing but call a single procedure, philg_img_target, defined server-wide. Suppose Web standards evolve so that there is a way for the server to tell a client to what color space standards an image was calibrated. By changing this single procedure, one could make sure that all of the thousands of PCD-derived images on photo.net are sent out with an "I was scanned in the Kodak PhotoCD color space" tag. The photos will then be rendered on users' monitors with the correct tones (right now they come out way too dark on Linux, a bit dark on Windows, and a bit too light on the average Macintosh). All of the personalization code that sets and reads magic cookies is also encapsulated in philg_img_target (the source code for which is available at http://philip.greenspun.com/panda/philg_img_target.txt).

How does this all happen? The image conversions from PhotoCD to JPEG are done with ImageMagick. You can find precompiled binaries for most Unix variants and also Windows 95/NT at http://www.imagemagick.org. Here's what a typical ImageMagick command (to make a thumbnail JPEG) looks like:

convert -interlace NONE -sharpen 50 -border 2x2 \

-comment 'copyright Philip Greenspun' \

'/cdrom/PHOTO_CD/IMAGES/IMG0013.PCD;1[1]' bear-salmon-13.1.jpg

Note that this is all a single shell command. There are a bunch of optional image processing flags before the "from-file name." To avoid the production of progressive JPEGs (see below), the default from ImageMagick, the command invokes "-interlace NONE". Any kind of scanning introduces some fuzziness into the image so it is best to ask ImageMagick to sharpen a bit with "-sharpen 50". For a two-pixel border all around, there is "-border 2x2". One could choose the color but the default will be black. Finally, ImageMagick lets you add a comment to the JPEG file. Anyone who opens the JPEG with a standard text editor like Emacs will be able to see that it is "copyright Philip Greenspun" (conceivably other programs might display this information, but PhotoShop doesn't seem to, on either the Macintosh or Windows). See http://www.imagemagick.org/www/convert.html for a complete list of options.

The from-file name, "/cdrom/PHOTO_CD/IMAGES/IMG0013.PCD;1[1]" is mostly dictated by the directory structure on a PhotoCD. However, the final "[1]" tells ImageMagick to grab the 128x192 pixel thumbnail out of the Image Pac ("Base/16" size in Kodak parlance). The to-file name, "bear-salmon-13.1.jpg" includes the image number (13) from the original PhotoCD to make it easier for a human to find the entire Image Pac if requested.
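The naming rules above are mechanical enough to generate. Here is a minimal Python sketch that builds the argument list for one conversion, using only the flags from the command shown in this chapter. The function names are hypothetical, and the `IMG%04d.PCD;1` pattern is an assumption generalized from the single path in the example. The sketch builds the command without running it.

```python
import shlex


def pcd_source(image_number, resolution, root="/cdrom/PHOTO_CD/IMAGES"):
    """From-file name: PhotoCD path plus the [n] resolution selector."""
    return f"{root}/IMG{image_number:04d}.PCD;1[{resolution}]"


def convert_command(filename_stub, image_number, resolution,
                    sharpen=True, border=True, comment=None):
    """Argument list for one PhotoCD-to-JPEG conversion."""
    args = ["convert", "-interlace", "NONE"]   # avoid progressive JPEGs
    if sharpen:
        args += ["-sharpen", "50"]
    if border:
        args += ["-border", "2x2"]
    if comment:
        args += ["-comment", comment]
    args.append(pcd_source(image_number, resolution))
    # To-file name keeps the disk image number and the resolution number.
    args.append(f"{filename_stub}-{image_number}.{resolution}.jpg")
    return args


cmd = convert_command("bear-salmon", 13, 1, comment="copyright Philip Greenspun")
print(shlex.join(cmd))   # shell-quoted form, suitable for logging
```

Passing the list form to a process launcher avoids having to shell-quote the ";" and "[1]" in the from-file name by hand.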

ImageMagick is convenient but typing 300 commands like the above for each PhotoCD would get tedious. A girlfriend of the author's took pity and used what she had learned as an MIT undergraduate to write a Perl script to automate the process: http://philip.greenspun.com/panda/pcd-to-jpg-and-fpx.txt.

Of course, no romance is one sunny Perl-filled day after another. When she delivered the code, I complained about the lack of data abstraction and told her that she needed to reread Structure and Interpretation of Computer Programs (Abelson and Sussman; MIT Press 1997), the textbook for freshman computer science at MIT. I also asked her to rename helper procedures that returned Boolean values with "_p" ("predicate") suffixes. She replied "People will laugh at you for being an old Lisp programmer clinging pathetically to the 1960s" and then dumped me.

Thus with a minimum of mechanical labor, we managed to get thousands of images on-line, in three sizes of JPEG. What was our reward? Hundreds of e-mail messages from people asking where they could find a picture of a Golden Retriever or a waterfall. Once again MIT friends and Perl came to the rescue. Jin Choi spent 15 minutes writing a Perl script to grab up all the captions from the flat files into one big list. We then stuffed the list into a relational database table:

create table philg_photo_cds (

photocd_id varchar(20) not null primary key,

-- bit vectors done with ASCII 0 and 1; probably should convert this to

-- Oracle 8 abstract data type

jpeg_resolutions char(6),

-- on which resolutions to write copyright label

copyright_resolutions char(6),

copyright_label varchar(100),

add_borders_p char(1) check (add_borders_p in ('t','f')),

sharpen_p char(1) check (sharpen_p in ('t','f')),

-- how this will be published

url_stub varchar(100) -- e.g., 'pcd3735/'

);

create table philg_photos (

photo_id integer not null primary key,

photocd_id varchar(20) not null references philg_photo_cds,

cd_image_number integer, -- will be null unless photocd_id is set

filename_stub varchar(100), -- we may append frame number or cd image number

caption varchar(4000),

tech_details varchar(4000),

im_index char(1) default 'a' not null

);

-- create Oracle Text index

begin

ctx_ddl.create_preference('stock_user_datastore', 'user_datastore');

ctx_ddl.set_attribute('stock_user_datastore', 'procedure', 'stock_user_datastore_proc');

end;

create index stock_ctx_index on philg_photos (im_index)

indextype is ctxsys.context parameters ('datastore stock_user_datastore memory 250M');
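To show what the stock photo index buys the reader, here is a minimal, self-contained Python sketch of a caption search. It deliberately swaps in an in-memory SQLite table and a plain LIKE match for the Oracle table and Oracle Text index above, so it illustrates the idea rather than the production query. The table is cut down to four columns, and the function names and the sample filename stubs are hypothetical.

```python
import sqlite3

ROWS = [
    (2, "bearfight-2", "Brooks Falls, Katmai National Park",
     "Nikon 8008, 300/2.8 AF lens, Kodak Ektar 25 film"),
    (3, "montreal-flower-3", "In Montreal's Botanical Gardens",
     "Nikon 8008, 60/2.8 macro lens, Fuji Velvia"),
    (4, "montreal-olympic-stadium-4", "Montreal's Olympic Stadium",
     "Nikon 8008, 20/2.8?, Fuji Velvia"),
]


def build_index(rows):
    """Load (photo_id, filename_stub, caption, tech_details) rows into SQLite."""
    db = sqlite3.connect(":memory:")
    db.execute("""create table philg_photos (
                    photo_id integer not null primary key,
                    filename_stub text,
                    caption text,
                    tech_details text)""")
    db.executemany("insert into philg_photos values (?, ?, ?, ?)", rows)
    return db


def search(db, word):
    """Photos whose caption or tech details contain word (substring match)."""
    pattern = f"%{word}%"
    cursor = db.execute(
        """select filename_stub, caption from philg_photos
           where caption like ? or tech_details like ?
           order by photo_id""", (pattern, pattern))
    return cursor.fetchall()


db = build_index(ROWS)
for stub, caption in search(db, "montreal"):
    print(stub, "|", caption)
```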

Is this a great system or what? Let's consider what happens if there is a mistake in spelling a word in a filename. To correct the mistake, one must change the description.text file. Then one must either rerun the conversion script over the entire PhotoCD or manually change the filenames of three JPEGs (plus all the HTML documents that reference the thumbnail). Then one must rename the .tcl script that corresponds to the image and also update a row in the relational database table that sits behind the stock photo index system.

Perhaps it would be better to keep the image information in just one place: the relational database (RDBMS). Then we can drive the image conversion and .tcl file production from there. If we were willing to make the serving of plain old images dependent on the RDBMS being up and running, then we could just have HTML files reference photos according to their ID in the database and dispense with the static .tcl files.

Once we're running this as a database service, wouldn't it make more sense to let other people use it? If many photographers are using the same RDBMS server, then a fifth grader doing a report on Paris could search for "Eiffel Tower" and find all the photographers who had images of that monument and were willing to share it. It could also be useful for professional photographers who wanted to collaborate around images with their clients.

Coincidentally, this is more or less what some students in MIT Course 6.171 did a few years ago. It is up and running at http://www.photo.net/gallery/ now.

If you're not going to go the full RDBMS-backed route, you need to think about where to put JPEG files on your Web server. At first it seems reasonable to put image files into the same directories as the .html files where they are used. This can make it tough to find and tough to cross-reference images.


Here's the system that we used on photo.net for many years:

<IMG SRC="/images/pcd1253/pink-lady-and-dogs-8.1.jpg">

<IMG SRC="/images/pcd1253/pink-lady-and-dogs-8-sm.jpg" WIDTH=192 HEIGHT=128>

Note that if the image isn't exactly 128x192, the browser will crudely resize it to fit, potentially resulting in a fuzzy or blocky picture. Publishers who don't use automated production techniques such as those described above oftentimes find that they are lacking the desired size of digital image. They think to themselves, "I know that I want it to be 200x300 pixels so I'll just put in WIDTH=300 HEIGHT=200 tags". Here's the result, an e-mail message from a friend and the author's response:

I have just been through [a part of his site], which was completed six months ago. It is beginning to erode. The images are fading. Something is wrong with the server, or somewhere down the pipe. I am very worried that everything I'm doing has the possibility of fading. The images look weakened, diluted, and on the Mac, they look shamefully weak, like there's some complete idiot behind the site. None of us are idiots, and I may have been very inexperienced at the beginning of this web site, but I've been working with digital images since 1990 and I've never known of this happening before.

Something has to be done soon about this.

I notice that you're using WIDTH and HEIGHT tags in Netscape to rescale your images, e.g., on

http://.. [*** URL omitted to preserve the friendship ***]

do a "Page Info" in Netscape and you'll see that it is "455x288 (scaled from 504x319)".

Unless you're using my batch-conversion script or Web/db application, it is probably best to leave the WIDTH and HEIGHT blank. When you're all done with the page, run WWWis, a Perl program that grinds over an .html file, grabs all of the GIF and JPEG files to see how big they are, then writes out a new copy of the .html file with WIDTH and HEIGHT attributes for each IMG. The program is free and available from http://bloodyeck.com/wwwis/.
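The core of such a tool is reading the true pixel dimensions out of the image file itself. Here is a minimal Python sketch of that step for GIF and JPEG data. It is an illustration, not the WWWis source, and all three function names are hypothetical. The JPEG reader assumes every segment before the frame header carries a length field, which holds for ordinary files but is not checked exhaustively.

```python
import struct


def gif_size(data):
    """(width, height) from a GIF header: two little-endian shorts at byte 6."""
    if data[:6] not in (b"GIF87a", b"GIF89a"):
        raise ValueError("not a GIF")
    return struct.unpack("<HH", data[6:10])


def jpeg_size(data):
    """(width, height) from the first start-of-frame (SOF) segment of a JPEG."""
    if data[:2] != b"\xff\xd8":
        raise ValueError("not a JPEG")
    pos = 2
    while pos + 4 <= len(data):
        if data[pos] != 0xFF:
            raise ValueError("expected a marker")
        marker = data[pos + 1]
        if marker == 0xFF:            # padding byte before a marker
            pos += 1
            continue
        (length,) = struct.unpack(">H", data[pos + 2:pos + 4])
        # SOF markers are C0-CF, excluding C4 (DHT), C8 (JPG), and CC (DAC).
        if 0xC0 <= marker <= 0xCF and marker not in (0xC4, 0xC8, 0xCC):
            height, width = struct.unpack(">HH", data[pos + 5:pos + 9])
            return width, height
        pos += 2 + length             # skip the marker and its segment
    raise ValueError("no frame header found")


def img_tag(src, data):
    """An IMG tag whose WIDTH and HEIGHT match the actual image data."""
    width, height = gif_size(data) if data[:3] == b"GIF" else jpeg_size(data)
    return f'<IMG SRC="{src}" WIDTH={width} HEIGHT={height}>'
```

Called with the bytes of a 504x319 JPEG, `img_tag` would emit WIDTH=504 HEIGHT=319, so the browser never has to rescale.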

Other attributes potentially worth adding to your HTML tags are the following:

Some people like interlaced or "progressive" images, which load gradually. The theory behind these formats is that the user can at least look at a fuzzy full-size proxy for the image while all the bits are loading. In practice, the user is forced to look at a fuzzy full-size proxy for the image while all the bits are loading. Is it done? Well, it looks kind of fuzzy. Oh wait, the top of the image seems to be getting a little more detail. Maybe it is done now. It is still kind of fuzzy, though. Maybe the photographer wasn't using a tripod. Oh wait, it seems to be clearing up now ...

A standard GIF or JPEG is generally swept into its frame as the bits load. The user can ignore the whole image until it has loaded in its final form, then take a good close look. He never has to wonder whether more bits are yet to come.

The most serious problem with JPEG files is that they aren't stored in any kind of standard color space. So if you edit them to look good on a Macintosh monitor (gamma-corrected), they might look horrible on someone's Linux machine (typically not gamma-corrected). PhotoCD Image Pacs are always produced in a calibrated color space, thus preserving your investment in an image library against the day when the Web standards finally enable a publisher to specify a gamma along with an image.
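A little arithmetic shows why the same file looks different from machine to machine. The Python sketch below models a display as raising the normalized pixel value to the power of its gamma. The two gamma figures (1.8 for a gamma-corrected Macintosh, 2.5 for an uncorrected monitor) are assumptions chosen as typical values for displays of this era, not numbers taken from this chapter, and the function name is hypothetical.

```python
def displayed_luminance(pixel_value, display_gamma):
    """Relative light output (0.0 to 1.0) for an 8-bit pixel value."""
    return (pixel_value / 255) ** display_gamma


MID_GRAY = 128
for label, gamma in (("gamma-corrected (assumed 1.8)", 1.8),
                     ("uncorrected (assumed 2.5)", 2.5)):
    print(f"{label}: {displayed_luminance(MID_GRAY, gamma):.2f}")
```

Under these assumptions the same mid-gray pixel emits noticeably less light on the uncorrected display, which is the "way too dark" effect described above; pure black and pure white are unaffected.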

If you're in a hurry to get a handful of images from slides or negatives onto the Web, you may wish to use a desktop film scanner. The most interesting recent practical innovations in desktop scanners are systems that look at a piece of film from different angles and try to figure out what is a dust particle or scratch and what is part of the camera-formed image. An example of this technology is "Digital ICE", incorporated in the Nikon Coolscans.

While a publisher may have a substantial library of images on film, it is safe to say that digital cameras are ubiquitous in the hands of readers. Anywhere that users contribute text is an opportunity for you to let them contribute pictures as well. Sadly you can't expect users to do any resizing or thumbnail production so your server needs to be able to invoke ImageMagick or a similar tool at upload time. Expect the user to upload an enormous full-resolution image, e.g., 6 megapixels or 2000x3000 pixels. If you present this back unmodified to other readers they won't be happy because (a) the image will be slow to load, and (b) the image will be larger than their screen (unless resized by their browser). You'll probably want to produce a thumbnail and an 800x1200 pixel version, discarding the original upload unless you believe that a lot of your users will want to make prints.
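Before handing an upload to ImageMagick or a similar tool, the server has to decide what size to ask for. Here is a minimal Python sketch of that calculation, assuming you want to preserve the aspect ratio, accept either orientation, and never enlarge a small upload. The function name is hypothetical; the 800x1200 and 128x192 boxes are the sizes mentioned in this chapter.

```python
def fit_within(width, height, max_short=800, max_long=1200):
    """Largest (width, height) with the same aspect ratio that fits the box.

    The box applies in either orientation: the shorter side is limited to
    max_short and the longer side to max_long. Small images are left alone.
    """
    short_side, long_side = sorted((width, height))
    scale = min(1.0, max_short / short_side, max_long / long_side)
    return max(1, round(width * scale)), max(1, round(height * scale))


upload = (3000, 2000)                      # a 6-megapixel landscape photo
print(fit_within(*upload))                 # size for the large version
print(fit_within(*upload, 128, 192))       # size for the thumbnail
```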


Do be careful in reviewing user-submitted photos. In the United States, it is illegal to possess child pornography and it is not a defense to say that you did not know that you possessed it. You want to make sure that the Federales don't seize your server because of something a user uploaded. [One implication of this law is that an effective way to get rid of annoying relatives is by visiting their house, slipping some child pornography behind the sofa, then tipping off the police to the perverts at 80 Elm Drive. Your in-laws will plead righteous ignorance of what was behind their sofa all the way to Club Fed.]

Here are two common uses of watermarking:

For my first couple of years of publishing on the Web, I got very huffy about copyright infringement and entertained dark thoughts of litigation when one of my photos, without credit or payment, appeared on a site or in a print magazine. I didn't spend days behind a tripod waiting for the perfect moment so that people could use my work to promote their ugly commercial ventures.


But realistically litigation in the United States isn't for middle class people. I couldn't afford to hire a team of lawyers to chase down the miscreants and I didn't want to spend my life filing lawsuits myself. I decided to focus my energy on creating new works and not lose sleep over piracy of the old ones.

Then one of my readers e-mailed me a Web page with an uncredited usage of a bear photograph from Travels with Samantha:

". . . Yes, the IRS does try to intimidate and bully you. And, they publicly crucify some public figure each year (Willie Nelson, Leona Helmsley, Darryl Strawberry, Pete Rose, etc.) who has been caught with allegedly "fudged" returns. But, as you will learn, there is NO LAW, anywhere, that mandates you file a tax return! . . ."

For $88-1000, you could purchase assistance in "How To STOP Paying Federal Income Tax - LEGALLY!"

This wasn't exactly what I had in mind when I set up my site, so I sent the company some email requesting that they remove the picture. The response was frustrating but I was open-mouthed in admiration of its creativity:

Our programmer says that he has never seen your book, and that the picture came from a site that listed lots of pix (both gif & jpg) which were presented as available for download by anyone! At this point we are not interested in getting into a tussle with you, but the question is now open whether the picture is original with you or you took the picture from the same source. We'll need some coroberation to verify your claim to the picture before we go any farther. Dana Ewell, CEO !SOLUTIONS! Group

I still didn't have enough money to hire a team of lawyers to "put the genie back in the bottle" but why not use the following assets: (1) philip.greenspun.com is more interesting than average; (2) philip.greenspun.com is more stable and, because it is so old, better-indexed than average. Every time another publisher used one of my 10,000 on-line images with a hyperlinked credit, I might earn a new reader. Did their site suck? So much the better. The user wouldn't be likely to press the Back button once he or she arrived at my site through the "photo courtesy http://philip.greenspun.com" link. Of course, my site is non-commercial so extra readers don't translate into cash, but it is satisfying that my work becomes available to people who might not find it otherwise. That took care of the good-faith users. Could I use my site's stability and presence in Web indices to deal with the bad-faith users?

It hit me all at once. An on-line Hall of Shame. I'd send non-crediting users a single e-mail message. If they didn't mend their ways, I'd put their names in my Hall of Shame for their grandchildren to find. I figure that anyone reduced to stealing pictures is probably not creative enough to build a high Net Profile. So a search for their name won't result in too many documents, one of which will surely be my Hall of Shame. What if the infringer were to retaliate by putting up a page saying "Philip Greenspun beats his Samoyed"? Nobody would ever find it because a Google search for "Philip Greenspun" returns too many documents. Try it right now, then try "Shawn Bonnough" for comparison, making sure to include the string quotes in both queries.

A practical-minded person might argue that my system doesn't get me any cash or stop any infringement. A technology futurist might argue that one of the micropayment schemes from the 1960s is going to be set up Real Soon Now. Both of these people are right I guess, but I won't be sorry if what seems to be the evolving custom on the Internet solidifies into law: send e-mail asking for permission to republish, be scrupulous about crediting authors, and prepare to be vilified if you flout these rules.

Would that be the best possible system? Maybe not, but it has to be much more efficient for society than a bunch of corporations hiring lawyers to sling mud at each other in court. Under my system, we can enjoy seeing our work (with credit) on other folks' sites, vent our spleens at midnight by adding to a Web page of transgressors, and then move on to new productive activities.

Note that other folks seem to be independently settling on this philosophy. In 1998, for example, the Open Content initiative was launched to develop a license that one can use to give away content in exchange for credit when it is reused. See www.opencontent.org.

Here is what you might have learned in this chapter:

Some of the information (only the technical parts, not the aspects related to photography) can be updated. JPEG images can now carry headers (digital cameras embed all the technical information inside the headers themselves using the EXIF format). It is also possible to put comments inside the images, so you don't have to maintain data about an image separately from the image. All you need to do is put the information in the image once and then program your Web server to pull that information out as and when required (full-scale searching based on comments, exposure, camera model...). Umm, hope someone benefits.

-- Sanjeev Dwivedi, May 7, 2003
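
The suggestion in the comment above, storing a caption inside the JPEG and having the server pull it out on demand, might be sketched roughly as follows. This is a minimal illustration in Python using only the standard library, not anything this chapter's software actually does. The function names `jpeg_comments` and `make_segment` and the sample caption are made up for the example. The sketch reads only plain JPEG comment (COM) segments; decoding EXIF fields such as exposure or camera model would need a fuller parser.

```python
import struct

SOI = b"\xff\xd8"  # start-of-image marker
EOI = b"\xff\xd9"  # end-of-image marker


def jpeg_comments(data: bytes) -> list:
    """Return the text of each COM (0xFFFE) segment that precedes the image data.

    Hypothetical helper for illustration; assumes a well-formed JPEG header.
    """
    if data[:2] != SOI:
        raise ValueError("not a JPEG stream (missing SOI marker)")
    comments = []
    pos = 2
    while pos + 4 <= len(data):
        if data[pos] != 0xFF:
            raise ValueError("expected a marker at offset %d" % pos)
        marker = data[pos + 1]
        if marker == 0xFF:
            # Fill byte before a marker: skip it and look again.
            pos += 1
            continue
        if marker in (0xDA, 0xD9):
            # Start of scan or end of image: no more header segments.
            break
        # The two-byte big-endian length counts itself but not the marker.
        (length,) = struct.unpack(">H", data[pos + 2:pos + 4])
        if length < 2:
            raise ValueError("corrupt segment length at offset %d" % pos)
        payload = data[pos + 4:pos + 2 + length]
        if marker == 0xFE:
            # Latin-1 maps every byte to a character, so decoding cannot fail.
            comments.append(payload.decode("latin-1"))
        pos += 2 + length
    return comments


def make_segment(marker: int, payload: bytes) -> bytes:
    """Build one marker segment (hypothetical helper used for the demo below)."""
    return bytes([0xFF, marker]) + struct.pack(">H", len(payload) + 2) + payload


# A tiny synthetic stream: SOI, an APP0 segment, a comment, EOI.
# It has no pixel data, so it is useful only for exercising the parser.
sample = (
    SOI
    + make_segment(0xE0, b"JFIF\x00")
    + make_segment(0xFE, b"Bear, photographed in Alaska")
    + EOI
)

print(jpeg_comments(sample))
```

A server-side script could call something like `jpeg_comments` on each file in the image library when a page is requested, or once at indexing time, and use the result as the caption or as searchable text.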

The page mentions only GIF and JPEG formats. I'd suggest replacing GIF with PNG.

-- N Jacobs, July 17, 2003