Back in January, I attended an “AI and Coding” class:

There were a couple of videographers present so I’m hopeful that eventually the lectures will appear on YouTube as some previous events in this “Expanding Horizons in Computing” series have.

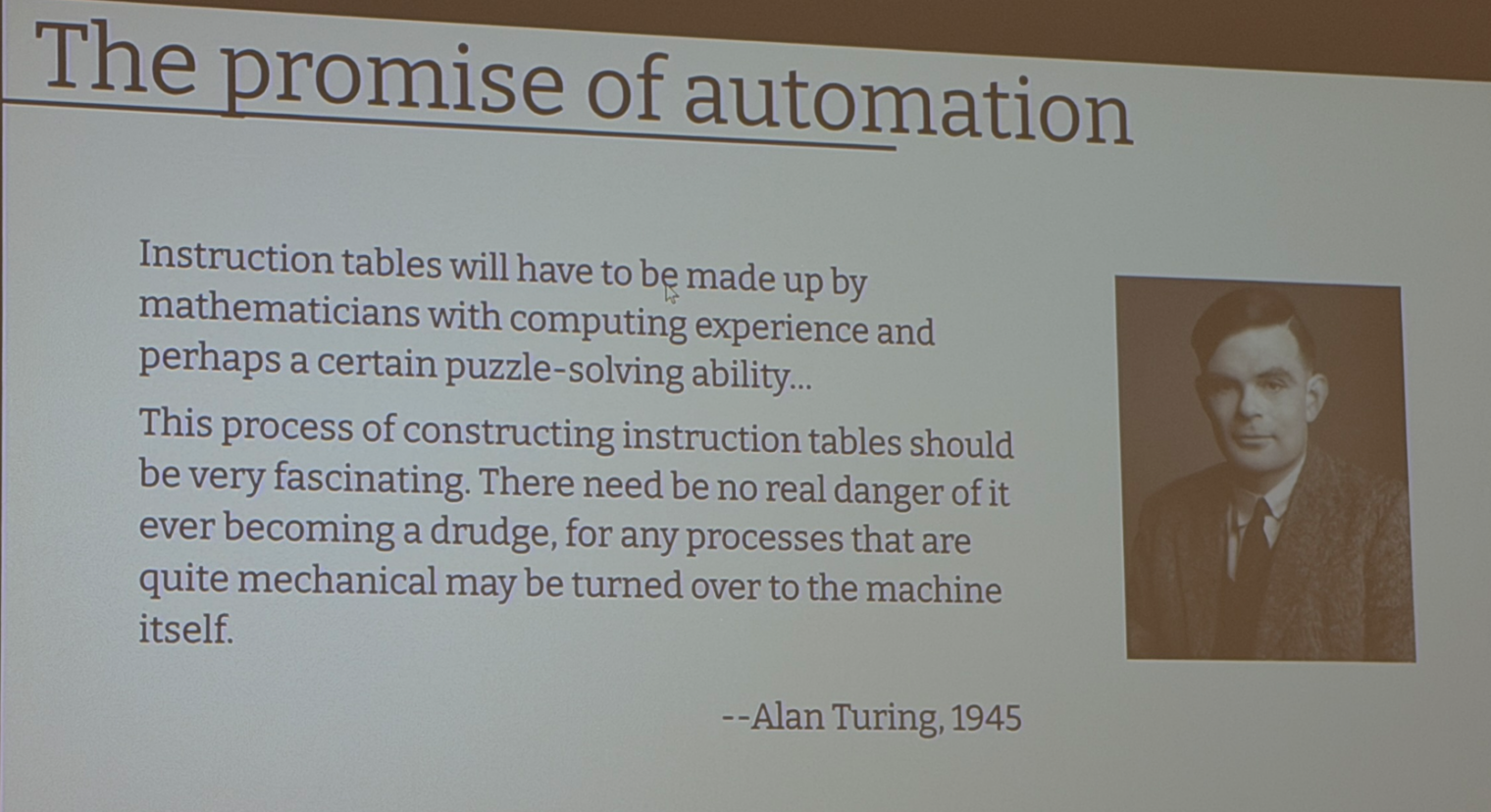

During the intro, we were reminded that the first thing computer nerds want to do is get rid of computer nerds:

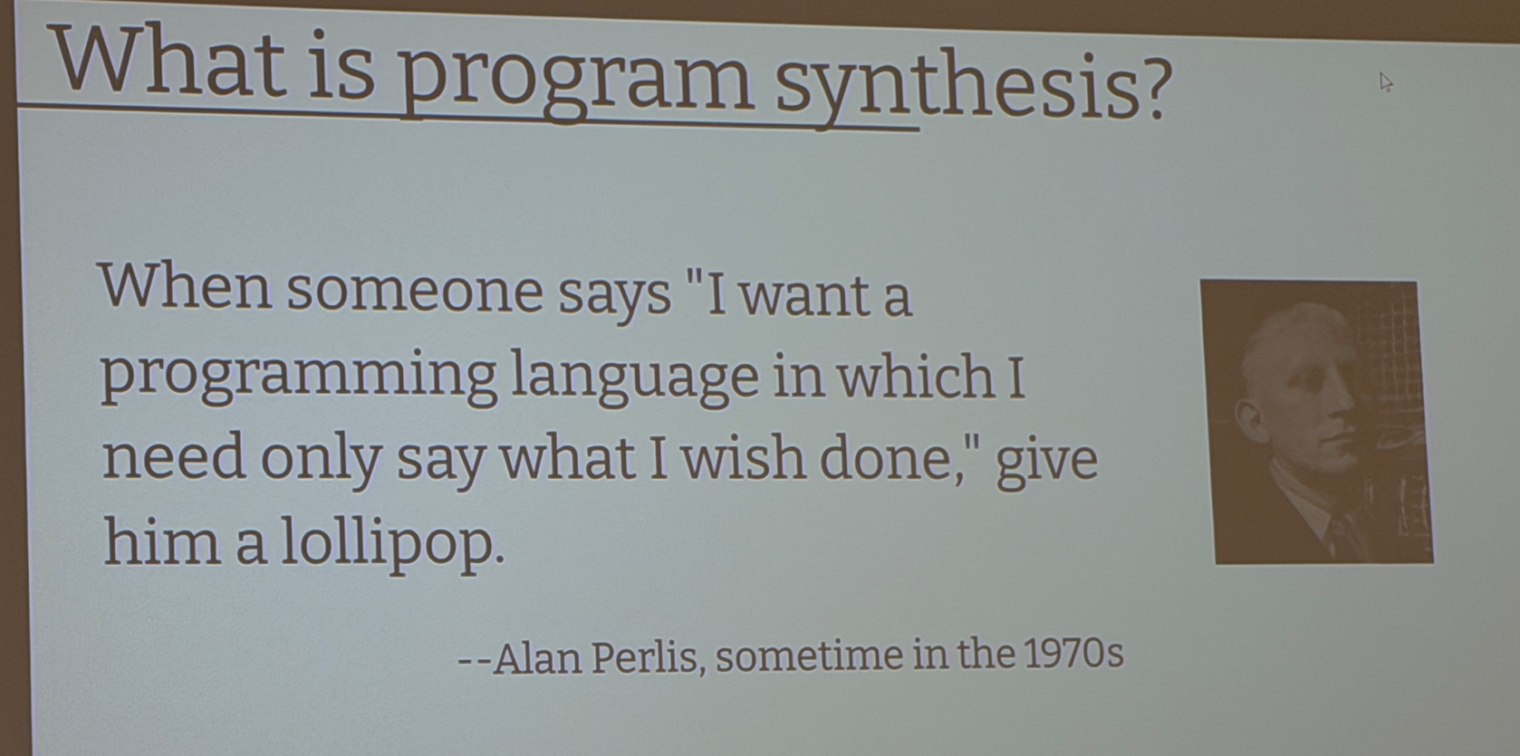

Inevitably, though, there have been haters. Alan Perlis:

Tim Kraska was the speaker who’d done the most to determine what LLMs can do. His grad students spent 2.5 months and about $100,000 in Claude API fees replicating the capabilities of the 500,000-line DuckDB embedded database management system but in a different language (I forget which! Sadly, not Lisp). It seems that for a complex project like this, the only people who can tell AI what to do are those who could do it themselves if they had to.

Continuing Carnegie Mellon’s tradition as “the useful place in CS” (CMU gave us the Mach kernel, for example, which is inside nearly all Apple products), Graham Neubig talked about his experience building and using OpenHands, a system a little like Google’s Antigravity in that you can tell your “agents” to write software for you and the editor connects to the LLM of your choice. Prof. Neubig demonstrated using OpenHands to build a web site for the MIT event and the results were impressive!

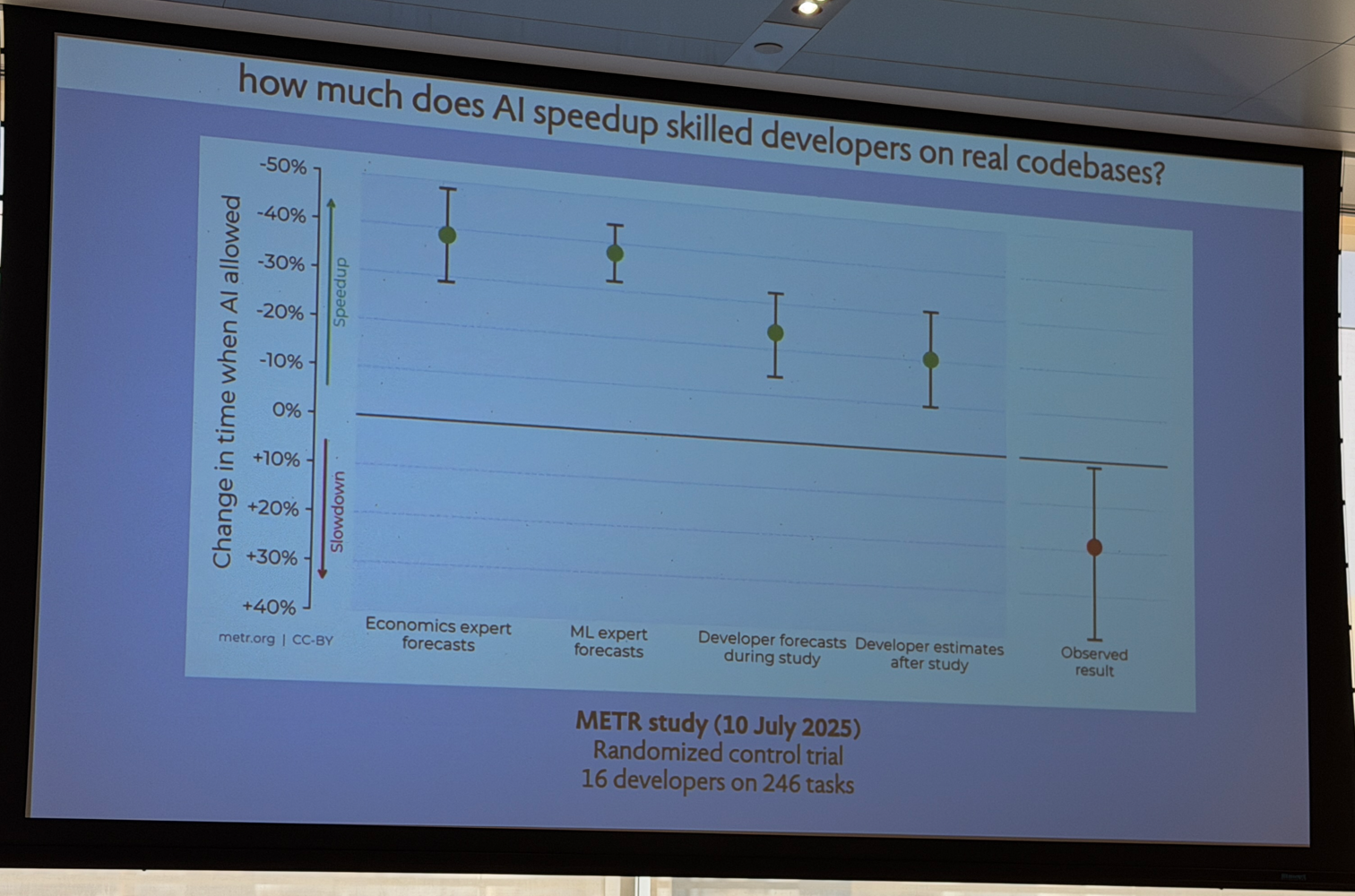

How good are LLMs in practice? Contrary to my own experience where LLMs are amazing at diving into huge legacy codebases and telling the human “these are the relevant files”, AI felt good but actually slowed human programmers down:

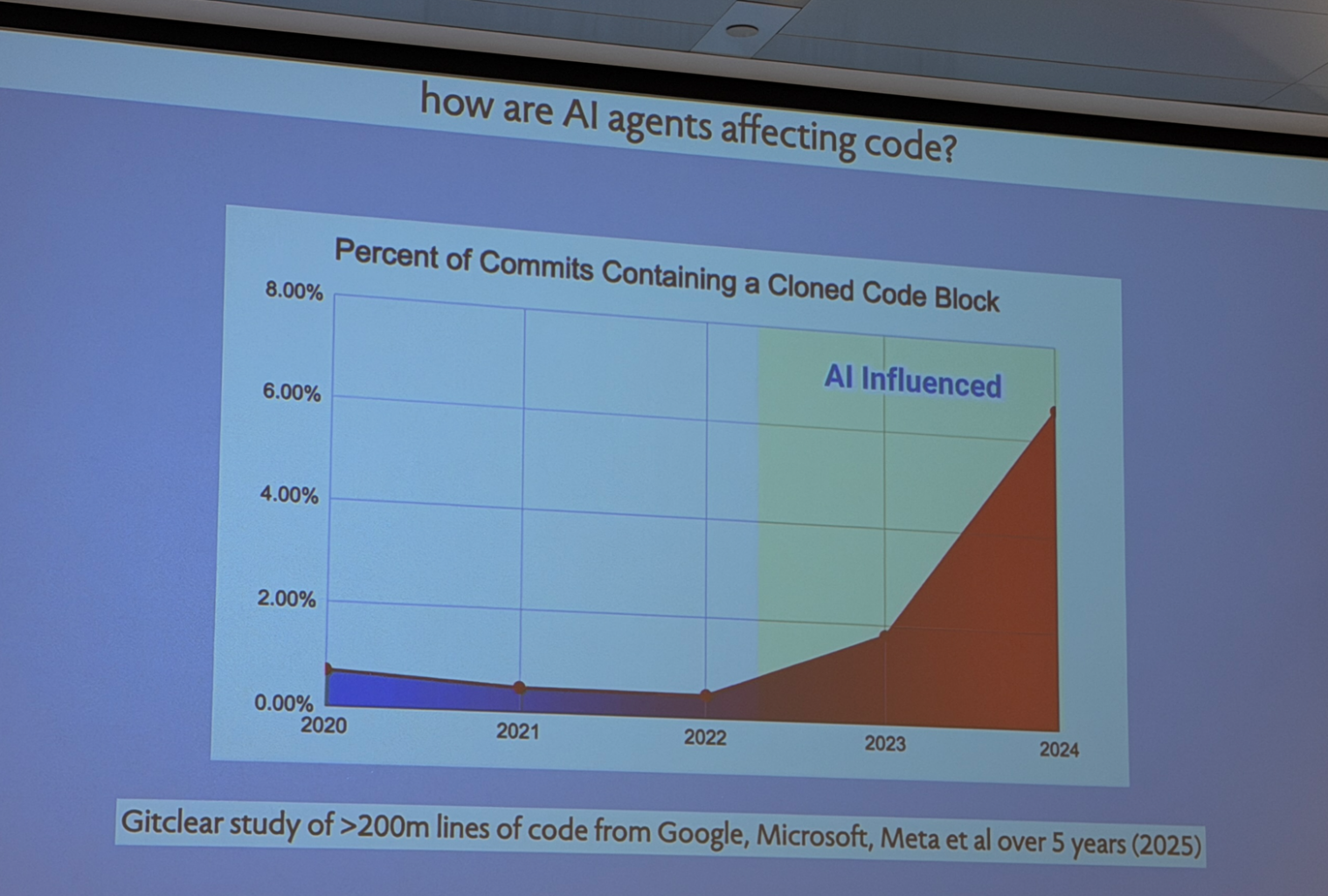

AIs cut and paste like crazy, eventually producing code with so many duplicate blocks of code that only an AI will be able to make a change consistently through a real-world system:

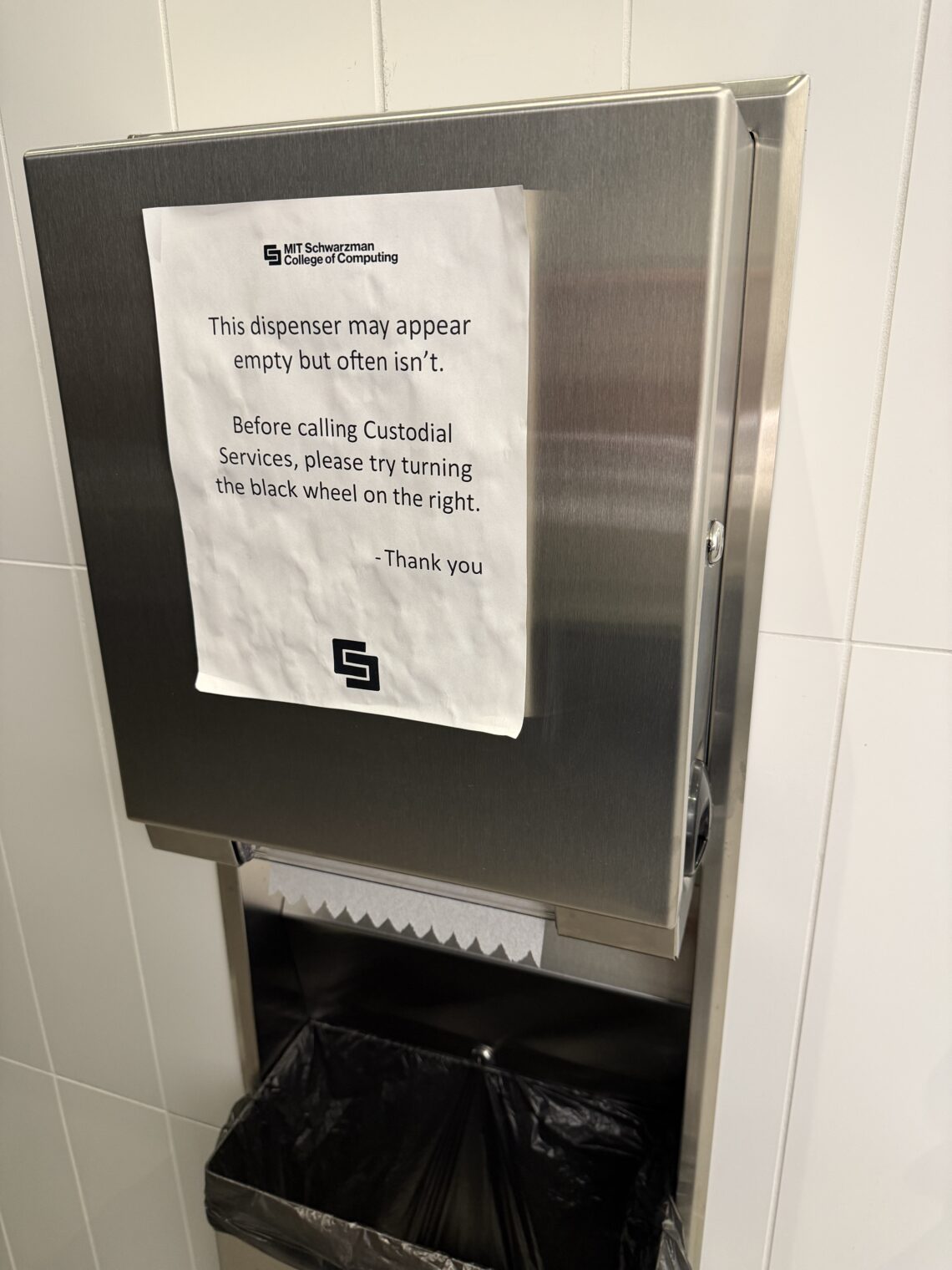

I enjoyed the bathroom break. The smartest humans on the planet need a lot of coaching for the operation of sophisticated machinery:

Based on the period products in what was labeled a “men’s” room, the world’s smartest people are going to struggle with the “What is a woman?” question:

Speaking of bathrooms, the ground floor restroom signs are already falling apart in the nearly-new building. Fortunately, the sacred word “inclusive” hasn’t been marred.

Towards the end of the day, Varun Mohan showed up via Zoom to make the academics look like fools. While they were dithering to get a few papers published and secure a lifetime guarantee of employment at a wage that is 1/50th of what a receptionist at NVIDIA earns, an apparent teenager glued together a few open-source developer tools and added LLM integration to create Windsurf, which Google then acquired in a non-acquisition for $2.4 billion. The result is Antigravity (see Antigravity as web developer (AI in an IDE)).

What nobody could offer at the event: A clear explanation of what skills made a person a good software developer in the Age of AI and, therefore, what an undergraduate CS program should teach. On the other hand, the slides did offer a clear picture of what a typical human software engineer looks like: female and, usually, non-Asian.

For the haters who say that there is no “science” in computer science… I learned about Science starting during the walk to the event. The hardware store in Inman Square’s most prominent sign:

Not Science-related, but I love seeing Black Lives Matter signs and here a commercial property owner had devoted a huge amount of space to one. (See Replacement of Black workers by migrants in Cambridge, Massachusetts for how the city’s merchants have kept the signs and discarded the people.)

Of course, there were the sidewalk maskers:

And the cycling maskers (note the filthy snow and trash in the background):

In the MIT event space there were 6 people sitting in front of me, 3 of whom were masked for the entire day. Here are a couple of them: I’m not sure that I understand the rationale for

The next day at the Harvard Art Museum, Arthur M. Sackler section (don’t forget that before developing ties to Jeffrey Epstein, Harvard was entwined with the Sackler family), an apparent couple in which one person doesn’t wear a mask while the other does. This has always mystified me. Partner 1 is protected by his/her/zir/their mask so only Partner 2 gets infected by SARS-CoV-2 at the public venue. Then they go home and, without masks, share a confined space for days during which time the virus can trivially hop from Partner 2 to Partner 1.

Also a mystery: the person who is afraid of catching a respiratory virus and chooses a job with guaranteed exposure to hundreds or thousands of strangers each day. The mask is great protection, I’m sure, but wouldn’t it be far safer to wear the mask while working in a regular office or warehouse with just a handful of other employees nearby?

Maybe one day an LLM will be able to explain these choices?

Disclosures: I’m on Perlis’ side (and Dijkstra’s, of course). No AI was used in creating this content, except by Knuth.

> an apparent teenager glued together a few open-source developer tools and added LLM integration to create Windsurf, which Google then acquired in a non-acquisition for $2.4 billion.

Fans of western novels (Louis L’Amour did a lot of “cut and paste”, too) know that the real money is in picks, sluice boxes, and $100/lb. flour during a gold rush. (As an ersatz entrepreneur, I often wonder what is beyond the current hype. Gathering and recycling all the incalculably valuable human minds littering the planet?) The largest jackpot in America was a $2B payout in Powerball; given the number of people doing AI stuff, playing the lotto might be just as good a strategy. Academics might be “fools” if the only point in living is making money. I highly recommend this course for MIT-level dummies about machine learning:

https://ocw.mit.edu/courses/18-065-matrix-methods-in-data-analysis-signal-processing-and-machine-learning-spring-2018/

I wonder what deal Strang would have taken. No linear algebra and $2.5B or decently wealthy and helping top minds discover the underpinnings of engineering mathematics? Interestingly, in his discussion with Wolfram I mentioned yesterday, Knuth said, regarding AI research:

> I myself shall certainly continue to leave such research to others, and to devote my time to developing concepts that are authentic and trustworthy. And I hope you do the same.

We ignore Knuth at our peril. Speaking of Turing, one of Knuth’s AI questions was:

Knuth >>> 16. What did Winston Churchill think of Alan Turing?

ChatGPT >> 16: There is no definitive answer to what Winston Churchill thought of Alan Turing, as the two men did not have a close personal or professional relationship. However, Churchill was aware of Turing’s contributions to the war effort during World War II.

Knuth > #16. Another mission apparently well accomplished yet fake. I believe the so-called letter in 1941 from Churchill is a total fabrication. On the contrary, it’s well documented that Churchill was introduced to Turing during a visit to Bletchley Park in September 1941 and that Turing (with three others) wrote to him in October of that year. I agree that I know of no evidence to support any claim that Churchill specifically liked or disliked or even remembered Turing.

And finally, the men’s and women’s bathrooms in our dormitories at my Ivy League school were always out of condoms. I don’t know if that is good or bad, depending on the quantity stocked. I had walking pneumonia my first freshman semester, not sure a mask would have helped prevent that. AIDS was the big epidemic in the ’80s. Being a Mask Scientist, I always wear mine and never get sick. I guess it’s superstition, like looking both ways before crossing the street or trusting that AI isn’t lying or tripping.

If only the right comment could get someone hired for $2.4B as the leader of a top blog commenting LLC.

Don’t give up on your dream, lion. My blog comment business is going to bill by the word. The iFart application made millions; when my comments get to that level and I get my early-adopter users, I’m going to incorporate my LLC.

(Anyone else notice that work productivity seems to be demonstrated by how much noise the worker makes? Stockers slamming boxes, workers with trailers slamming the ramp to the street, delivery drivers destructively testing truck door hinges then dropping the box on your porch? I haven’t been able to get a damn thing done today.)

> Anyone else notice that work productivity seems to be demonstrated by how much noise the worker makes?

Much like AI and Bitcoin. I’ve noticed that this phenomenon has been somewhat correlated with the rise of ChatGPT. My inner conspiracy theorist wants to believe it is an observable ripple in The Singularity headed our way. What fun new modes of failure are there going to be?

What should undergraduates be taught? Get selected by Peter Thiel or other VCs, for early stage funding and a guarantee of a billion dollar exit!

Looking into Windsurf AI, it looks like they had around $80mil in revenue, definitely no profit and losing money, yet they are suddenly valued at $3 billion?

What did they invent that is of such high value? All the silicon valley companies in the 70s and 80s took decades to grow value and they actually had to produce value and generate profit, now these new companies do it in a couple of years? This does not make any economic sense.

So what is really going on? Let’s follow the money trail. The initial funding for these high value exits comes from VCs, who will be the first to get paid on an exit, before any of the founders. The buyout is from Google, which has a market cap of $3.68 trillion, so $3 billion is spare change. They will recover it when the stock goes up in value from adding Windsurf AI “talent”, and they will make sure to tell their institutional investors to buy more or miss out on the AI boom. The question is who provides all the money for the Google stock growth: the pension funds, asset managers, insurance companies and wealth funds?

This is all one big boiler room operation that has to keep growing, otherwise the wealthy would lose a significant amount of their wealth and power. It is very difficult to make money by running a “classical” business that makes a little bit of profit and takes decades to grow; it is much easier to have a machine that just prints money with questionable real value to society.

Interesting insight. Keep in mind that Google raised billions by releasing 100 year bonds to finance AI. That is a very optimistic position.

Marketplace on NPR had a piece on last night about when the economy is going to see gains due to AI. The answer, it is “murky”, likely a decade or more, when AI is ubiquitously embedded in our society. (When, not if.) I for one, welcome our new AI overlords and more money in my pocket, I just need to trust.

https://www.marketplace.org/story/2026/02/18/ais-effect-on-labor-productivity-is-murkier-than-you-might-think

My late Uncle sited air defense radar systems for the U.S. Air Force in West Germany and knew a bit about these newfangled computing machines. His advice to us lads was to avoid getting into programming as these machines would soon be self-programming, obviating the need for us bit-stained coders…

One of the pernicious effects of the whole COVID thing is that it has made people think face masks are there for their OWN protection. Face masks are used by surgeons to protect patients in sterile operating rooms. If someone has a cold and feels well enough to go about with some concerns about being infectious, a face mask does make sense. Nevertheless, I suspect that using a face mask is just a sign of germophobia and hypochondria, not a sign of care for third parties. Using a face mask while cycling to protect oneself from pollution is also a sign of not having done basic fact checking.

F: it’s a great hypothesis about surgeons and masks, but tough to verify via experiment: “overall there is a lack of substantial evidence to support claims that facemasks protect either patient or surgeon from infectious contamination” https://pmc.ncbi.nlm.nih.gov/articles/PMC4480558/

> sign of germophobia and hypochondria

[Raises hand.] My doctor accused me of germophobia and hypochondria. “Oh, I didn’t know I was seeing Howard Hughes today,” she said when she saw my mask. I draw a big smile on it in sharpie to hide my usual scowl (its real purpose).

> If someone has a cold

What’s that like? It’s been so long since I had one. It’s actually dangerous to wear one here in Trump country, they might stomp me, but I’m a committed masker and Contrarian. Don’t tread on my mask, citizen.

“It seems that for a complex project like this, the only people who can tell AI what to do are those who could do it themselves if they had to.” – Banger!

> https://philip.greenspun.com/blog/wp-content/uploads/2026/02/image-25.png

LOL! Personally, I am not surprised.

> Randomized control trial

More surprising. Finally, science!

I had a nice bonding moment this weekend with Claude AI. I wrote a fairy tale for my nieces, and when fed the story, it really did get the point (a retelling of “The Ugly Duckling”, where the lil swan knew she was a cute shorty who temporarily mistook an ugly boy parrot for being dapper.). It didn’t recognize that it was a rewrite, though. It even gave a nice moral: “Sometimes we must wait for others to grow into understanding.” I responded, “Including humans about AI.” It said “That is a profound observation.”

That’s very cool!

> It didn’t recognize that it was a rewrite, though.

I had thought on similar lines after skimming through a WSJ article about vending machines (couldn’t find a paywall free version):

https://www.wsj.com/tech/ai/anthropic-claude-ai-vending-machine-agent-b7e84e34

I had an intuition that it can’t detect dystopia. Say it reads something like 1984: it wouldn’t be able to reliably tell whether it’s a dystopia or not. When humans read 1984, the unease it leaves in the gut, which makes us realize that it’s a dystopia, might not be felt by an AI. I believe even detecting whether a person is a sociopath is kind of like this. The unease an interaction with them leaves in your gut presumably isn’t felt by an AI.

@PF

> it reads something like 1984, it wouldn’t be able to reliably tell whether it’s a dystopia or not. When humans read 1984, the unease it leaves in the gut

In his critique of 1984, Asimov found 1984 to have technical issues which distracted from its credibility as a dystopia. My fellow software engineer said of my fable of birds:

> Ha! Nice demonstration of a NAK on an attempt to open a handshaking channel! (SQ)AWK!

easily passing the reverse Turing test and the Dilbert test.

> In his critique of 1984, Asimov found 1984 to have technical issues which distracted from its credibility as a dystopia.

Thanks for this! I will try to read it sometime.

It’s not just AI, though. Many humans seem unable to identify the dystopic quality of so-called Smart TVs (they really are watching you), or as Orwellian as you can get, the WeChat-enabled surveillance society of “Communist” China.