Product Idea: AI-fueled web site crawler that highlights HTML/CSS failures

In working with John Patrick Morgan to see what capabilities today’s LLMs have for doing web page design and upgrades of existing web pages/sites, it occurred to me that there is a gap in the marketplace. As far as I can tell, there isn’t a good tool that a publisher can run after every CSS tweak that will crawl the entire site and look for pages that were uglified by the tweak, broken by the tweak, never responded to the tweak because they reference some other CSS file or no CSS file, etc.
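The last failure mode in that list, pages that reference some other CSS file or none at all, can be checked without rendering anything. Here is a minimal sketch using only the Python standard library. The stylesheet name `/site.css` and the three status labels are my assumptions for illustration, not part of any existing tool.

```python
from html.parser import HTMLParser


class StylesheetFinder(HTMLParser):
    """Collects the href of every <link rel="stylesheet"> in a page."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr = dict(attrs)
        # rel may hold several space-separated tokens, e.g. "alternate stylesheet"
        rel = (attr.get("rel") or "").lower().split()
        if "stylesheet" in rel and attr.get("href"):
            self.hrefs.append(attr["href"])


def stylesheet_status(html, expected="/site.css"):
    """Return 'ok', 'other-css', or 'no-css' for one page's HTML.

    `expected` is a hypothetical path suffix for the shared stylesheet.
    """
    finder = StylesheetFinder()
    finder.feed(html)
    if not finder.hrefs:
        return "no-css"
    if any(href.endswith(expected) for href in finder.hrefs):
        return "ok"
    return "other-css"
```

A crawler would call `stylesheet_status` on each fetched page and report anything that is not `ok`. This catches only pages that will never respond to the tweak; it says nothing about pages that respond badly.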

There are plenty of tools that will look for changes to pages, but they consider every change to be a mistake. If the publisher has intentionally decided on a fresh new look for the site, these tools will flag every page on the site (could be tens of thousands or hundreds of thousands for a newspaper, for example) as being defective.

Gemini says that these are the best current options:

1. The Best “Set it and Forget it” Tool: Percy (by BrowserStack)

Percy is likely the closest to what you are asking for because it integrates rendering into the crawling process.

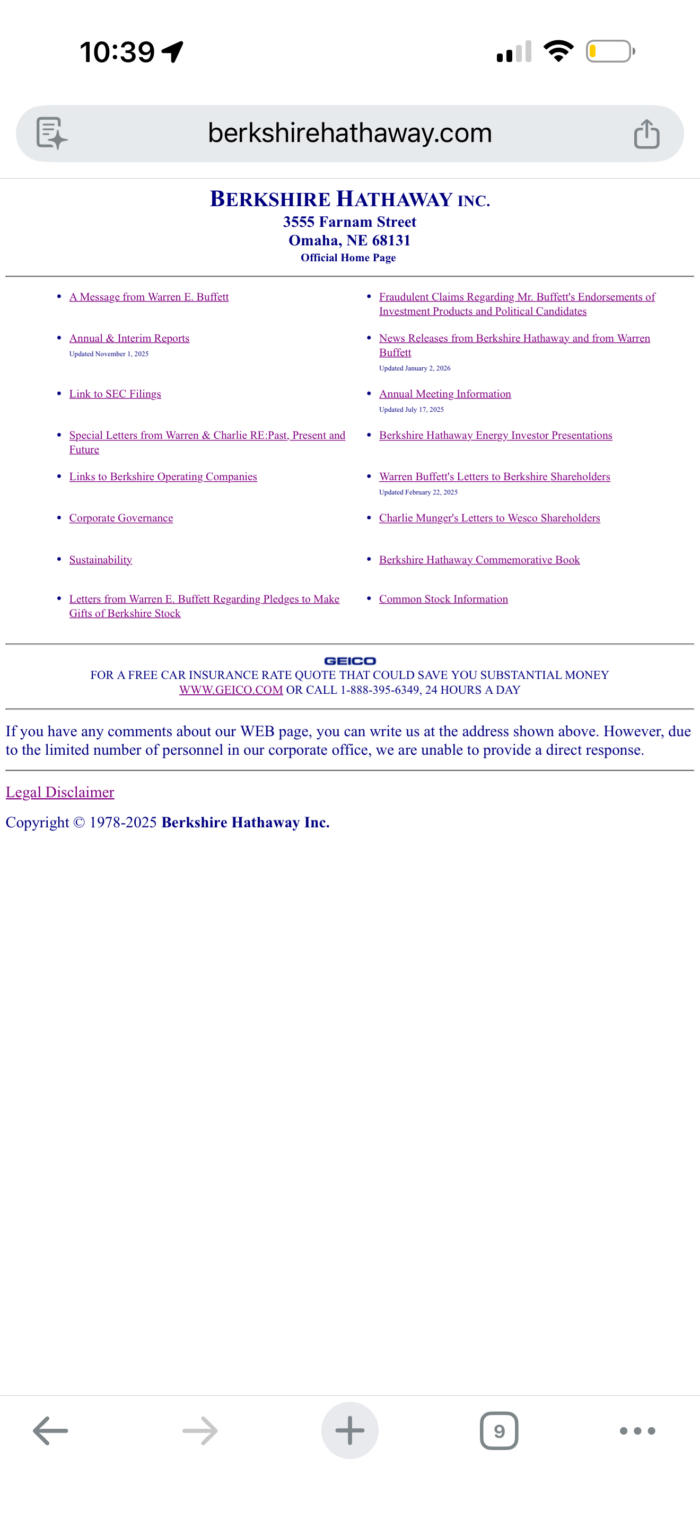

- How it works: You give it your sitemap. It visits every URL on both desktop and mobile (simulated iPhone/Pixel/iPad viewports).

- The “AI” part: It uses “Smart Diffing.” It ignores “noise” (like a pixel moving 1mm due to browser rendering differences) and only alerts you to actual visual changes (e.g., your navigation bar collapsing into two rows instead of one).

- Why for you: It handles the “entire site” requirement well if you feed it a list of URLs or a sitemap.

2. The “Smartest” AI: Applitools Eyes

Applitools is the leader in “Visual AI.” It attempts to mimic the human eye and brain.

- The “AI” part: It is smart enough to know the difference between Content changes (a date changing from yesterday to today) and Layout disasters (text overlapping an image).

- Unique Feature: It can sometimes detect “broken” layouts even without a baseline if you use their specific “Layout Match Level” which looks for structural integrity, though it is primarily a comparison tool.

- Mobile: It uses the “Ultrafast Grid” to render your pages on dozens of real mobile devices in seconds.

3. The “Generic Brokenness” Finder: BrowserStack Responsive Checker

If you don’t want to set up baselines and just want a tool to crawl the site and show you screenshots of every page on an iPhone X, this is the tool.

- How it works: You enter your URL (or a batch of URLs). It spins up real devices in the cloud, loads your page, and takes a high-res screenshot.

- The downside: It doesn’t automatically “flag” errors (it doesn’t know that the image shouldn’t be there). You still have to scroll through the gallery of screenshots yourself, but it’s much faster than checking on your phone manually.

4. The Free / Open Source Option: BackstopJS

If you are comfortable with the command line and want a free solution you can run on your own machine.

- How it works: You create a backstop.json file with a list of your URLs.

- The Workflow:

  - Run backstop reference (this crawls your current site and takes screenshots of every page).

  - Apply your CSS changes.

  - Run backstop test (this crawls again and compares).

- Result: It generates an HTML report showing you “diffs” (difference highlights) for every page that changed.
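For a site with thousands of pages, nobody is going to type the URL list by hand. The sketch below builds a BackstopJS-style configuration from a sitemap. The viewport sizes, the `css_redesign` id, and the helper names are assumptions for illustration; check the BackstopJS documentation for the full set of options before relying on it.

```python
import json
import xml.etree.ElementTree as ET

# Namespace used by standard sitemap.xml files.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def urls_from_sitemap(sitemap_xml):
    """Extract every <loc> URL from a sitemap document given as a string."""
    root = ET.fromstring(sitemap_xml)
    return [loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)]


def backstop_config(urls, site_id="css_redesign"):
    """Build a config dict with one scenario per URL (values are assumptions)."""
    return {
        "id": site_id,
        "viewports": [
            {"label": "phone", "width": 375, "height": 667},
            {"label": "desktop", "width": 1280, "height": 800},
        ],
        "scenarios": [{"label": url, "url": url} for url in urls],
        "paths": {
            "bitmaps_reference": "backstop_data/bitmaps_reference",
            "bitmaps_test": "backstop_data/bitmaps_test",
            "html_report": "backstop_data/html_report",
        },
        "engine": "puppeteer",
        "report": ["browser"],
    }


def config_as_json(urls):
    """Serialize the config so it can be saved as backstop.json."""
    return json.dumps(backstop_config(urls), indent=2)
```

Saving the output of `config_as_json` as backstop.json would, under these assumptions, give the reference and test runs one scenario per page in the sitemap.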

My Recommendation for Your Upgrade

Since you are retrofitting a legacy site, BackstopJS (if you have dev skills) or Percy (if you want a paid service) is the ideal workflow:

- Run a scan NOW (with the old CSS). This is your “source of truth.”

- Upload the new CSS.

- Run the scan again.

- The tool will instantly show you exactly which 5 pages (out of 100) broke because they had unique HTML that the new CSS didn’t account for.

Me:

You say that Percy will highlight changes to pages, but if the goal of a site redesign is to change the look of the site won’t that result in Percy flagging every page as dramatically different? What would be useful is a tool that flags only those pages that have been broken or disfigured by the change, not those that have simply changed from one style to another.

Gemini:

That is a very sharp observation. You’ve hit on the main “pain point” of visual regression testing: when you intentionally change the design, every page becomes a “failure” in the eyes of a standard pixel-comparison tool.

To solve this, the industry has moved toward Visual AI and Layout-Only testing. Here is how you can use tools to find “broken” pages rather than just “different” pages.

1. Applitools “Layout Match” (The Intelligence Approach)

Applitools is currently the leader in this. Instead of looking at pixels, their AI builds a structural map of the page.

- How it helps you: It has a “Layout” match level. This mode ignores changes in colors, fonts, and specific images. Instead, it checks if the relationships between elements are still healthy.

- What it flags: It won’t flag that your text is now blue instead of black. It will flag if your text is now overlapping an image, if a column has dropped to the bottom of the page unexpectedly, or if a button is partially hidden.

- The Workflow: You run it once on your old site to let the AI learn the “structure” of your content, then run it on the new site.
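To make the idea of checking relationships between elements concrete, here is a rough sketch of one such check: detecting content blocks whose bounding boxes overlap. This is not how Applitools works internally, which I have no visibility into. The `Box` type and the assumption that a browser has already reported each element's position are mine.

```python
from dataclasses import dataclass
from itertools import combinations


@dataclass(frozen=True)
class Box:
    """Bounding box of one rendered element, in CSS pixels (hypothetical type)."""
    name: str
    x: float
    y: float
    width: float
    height: float


def overlaps(a, b):
    """True if the two boxes share any area; touching edges do not count."""
    return (a.x < b.x + b.width and b.x < a.x + a.width
            and a.y < b.y + b.height and b.y < a.y + a.height)


def find_overlaps(boxes):
    """Return the names of every pair of boxes that overlap."""
    return [(a.name, b.name) for a, b in combinations(boxes, 2) if overlaps(a, b)]
```

A check like this ignores colors and fonts entirely, which is the point. It should be fed only sibling content blocks, because a container and its own children overlap by design.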

2. Galen Framework (The “Rules-Based” Approach)

If you want something that specifically looks for “disfigured” pages without needing a baseline at all, Galen is a powerful open-source tool.

- How it works: You write a simple “spec” file that describes what a “correct” page looks like.

- Example: “Image should be above text on mobile,” or “Main container should never be wider than the screen.”

- Why it fits your goal: You aren’t comparing the new site to the old one. You are testing the new site against the rules of good design. If a page has a horizontal scrollbar or a margin that is 0px wide on a phone, Galen will fail that specific page.
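The two example rules above can be sketched in plain Python to show what baseline-free testing amounts to. This is not Galen's spec syntax. The function name, the `image` and `text` element names, and the input format (element name mapped to x, y, width, height in pixels) are assumptions for illustration.

```python
def check_page(viewport_width, boxes):
    """Apply two layout rules to one page and return a list of failures.

    boxes maps an element name to (x, y, width, height) in CSS pixels.
    """
    failures = []

    # Rule 1: nothing may extend past the viewport, which would cause a
    # horizontal scrollbar on a phone.
    for name, (x, y, width, height) in boxes.items():
        if x < 0 or x + width > viewport_width:
            failures.append(f"{name} extends outside the {viewport_width}px viewport")

    # Rule 2: the image must end before the text begins.
    if "image" in boxes and "text" in boxes:
        _, image_y, _, image_height = boxes["image"]
        _, text_y, _, _ = boxes["text"]
        if image_y + image_height > text_y:
            failures.append("image is not fully above text")

    return failures
```

Because the rules describe the new design rather than the old one, a page passes or fails on its own merits, and an intentional restyling produces no false alarms.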

3. Percy’s “Grouped Diffs” (The Efficiency Approach)

Percy expects a global CSS change to alter every page. To keep the review manageable, it uses Diff Grouping.

- How it helps you: If you change the global

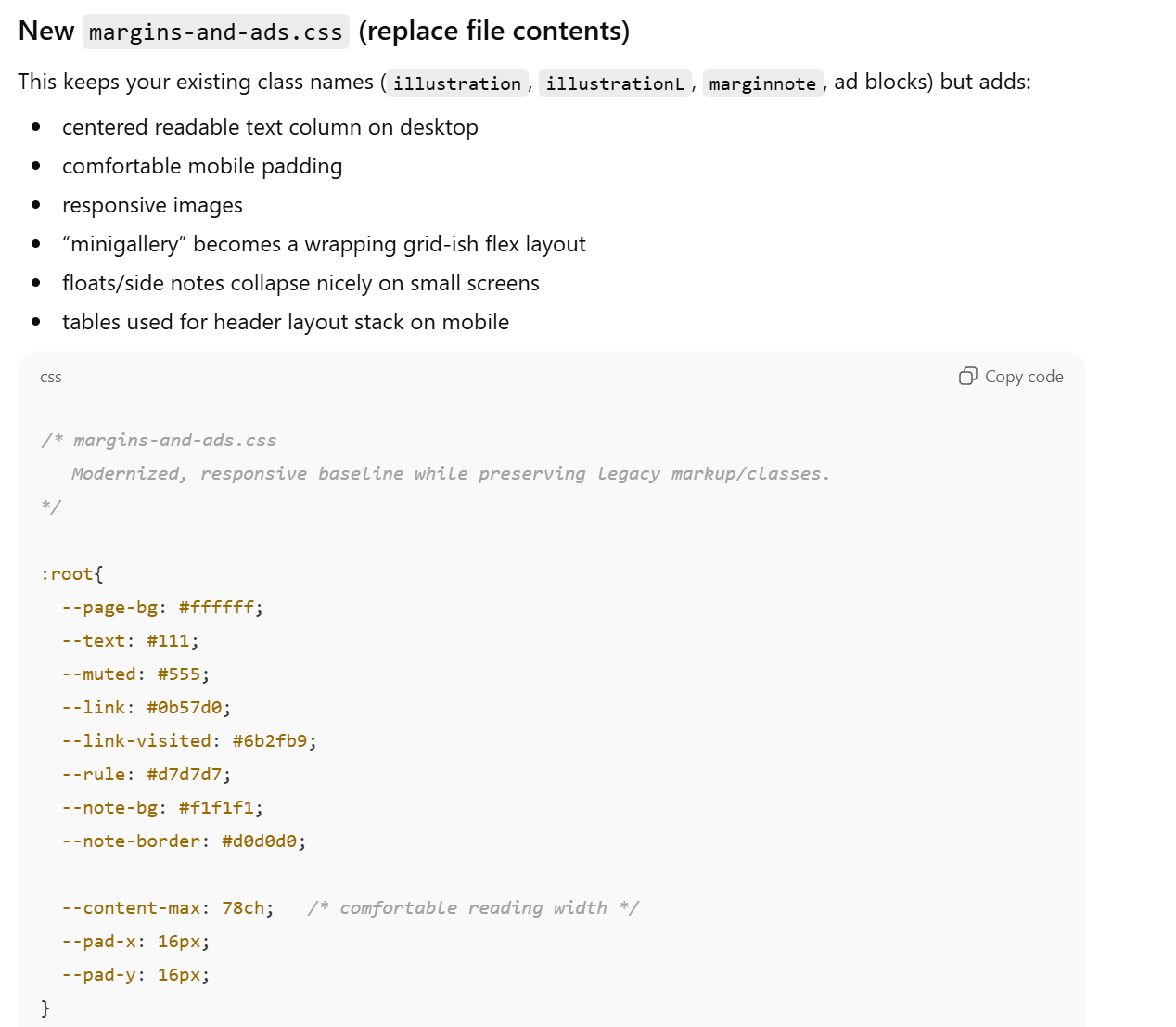

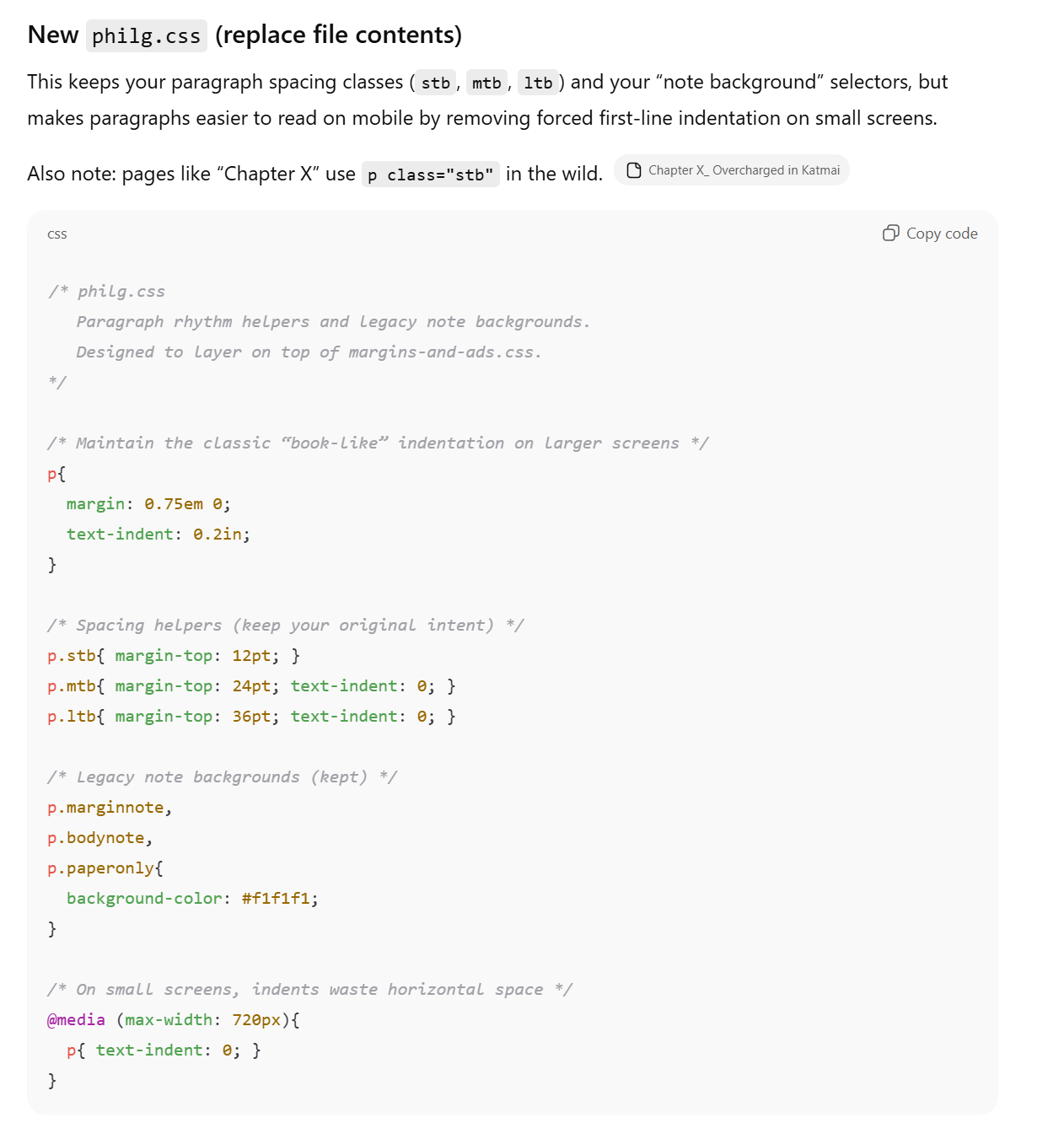

margins-and-ads.cssand it shifts the header on 400 pages, Percy will group all 400 pages into a single “change set.” - The “Broken” Finder: You look at the first page in the group. If it looks “correct” in its new style, you hit Approve All. If 398 pages look great but 2 pages look “disfigured,” the AI often detects that those 2 pages are “outliers” (different from the rest of the group) and flags them for individual review.
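The outlier idea is easy to sketch even without knowing how Percy implements it. Assume each page has already been reduced to a single number, the fraction of pixels that changed between the old and new screenshots. Pages that changed by roughly the typical amount were probably restyled as intended; pages far from the typical amount deserve a look. The function name and the 0.10 tolerance are assumptions of mine.

```python
from statistics import median


def outlier_pages(diff_by_page, tolerance=0.10):
    """Return pages whose diff fraction is far from the group's median.

    diff_by_page maps a URL path to the fraction (0.0 to 1.0) of pixels
    that changed. tolerance is a hypothetical cutoff, not a Percy setting.
    """
    typical = median(diff_by_page.values())
    return sorted(
        page for page, diff in diff_by_page.items()
        if abs(diff - typical) > tolerance
    )
```

For example, if four pages changed by about 30 percent and a fifth changed by 85 percent, only the fifth is returned. A page that barely changed at all is flagged too, which is useful: it probably never picked up the new CSS.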

4. “VisualEyes” (User Attention AI)

This is a slightly different type of AI. It doesn’t look for broken code; it looks for broken UX.

- How it works: It generates “Attention Heatmaps” based on your new design.

- Why it’s useful: If your CSS change accidentally makes a “Google Ad” look more prominent than your “Navigation Menu,” the AI heatmap will show a massive red blob over the ad. It flags pages where the visual hierarchy has been “disfigured” in a way that will confuse a human.

Summary Recommendation:

If you want a tool that “just knows” a page is broken:

- Applitools (Layout Mode) is the most “AI” way to ignore style changes but catch structural breaks.

- Google Search Console / Lighthouse (Free) is the simplest “No-Baseline” way. You can run a Lighthouse “Accessibility” and “Best Practices” audit on your site; it will automatically flag “Touch targets too close together” or “Content wider than screen”—the two most common ways a mobile site is “disfigured.”

[after a follow-up]

By default, Google Lighthouse does NOT look at your entire site.

If you open Chrome DevTools and click “Run Audit,” it only analyzes the single specific page you are currently looking at.

However, because you are dealing with a site-wide CSS redesign, you have three ways to handle this depending on how much “manual work” you want to do:

1. The Manual Way (Single Page)

If you use the standard Lighthouse tool (in Chrome or via PageSpeed Insights), you have to enter every URL one by one. This is useful for testing your “templates” (e.g., check one article page, one gallery page, and the home page), but it won’t find a broken layout on a random page deep in your archives.

2. The “Semi-Automatic” Way (Unlighthouse)

There is a popular open-source tool called Unlighthouse that specifically solves your problem.

- What it does: You give it your homepage URL. It crawls your entire site, finds every link, and runs a Lighthouse audit on every single page automatically.

- The Result: It gives you a dashboard showing a “Score” for every page. If your new CSS makes a specific page’s “Accessibility” or “Best Practices” score drop (for example, because text is now overlapping or a button is too small), it will stick out like a sore thumb in the list.
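Scanning a dashboard by eye gets tedious past a few hundred pages, so the before-and-after comparison is worth automating. The sketch below assumes the per-page scores from two runs have been exported into dictionaries of the form `{url: {category: score}}`. That shape and the 5-point threshold are my assumptions, not Unlighthouse's actual export format.

```python
def score_drops(before, after, min_drop=5):
    """List (url, category, old, new) for every score that fell by min_drop or more.

    before and after map a URL to {category: score on a 0-100 scale}.
    Pages or categories missing from the earlier run are skipped.
    """
    drops = []
    for url, new_scores in after.items():
        old_scores = before.get(url, {})
        for category, new in new_scores.items():
            old = old_scores.get(category)
            if old is not None and old - new >= min_drop:
                drops.append((url, category, old, new))
    # Largest drops first.
    return sorted(drops, key=lambda d: d[3] - d[2])
```

Running this after each CSS tweak would turn a site-wide audit into a short list of pages that got worse, which is closer to what a publisher actually wants to read.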

3. The “Google Search Console” Way (Field Data)

If your site is already live and verified in Google Search Console, Google is actually already auditing your site for you.

- Under the “Experience” tab in Search Console, look at “Core Web Vitals” and “Page Experience.”

- Instead of a lab test, this shows you real-world data from users. It will group your pages into categories like “Poor,” “Needs Improvement,” and “Good.”

- The Catch: This data is not instant. It takes about 28 days of real user traffic to update, so it’s better for long-term monitoring than for checking a CSS change you made ten minutes ago.

Which should you use?

For your CSS project, I recommend Unlighthouse.

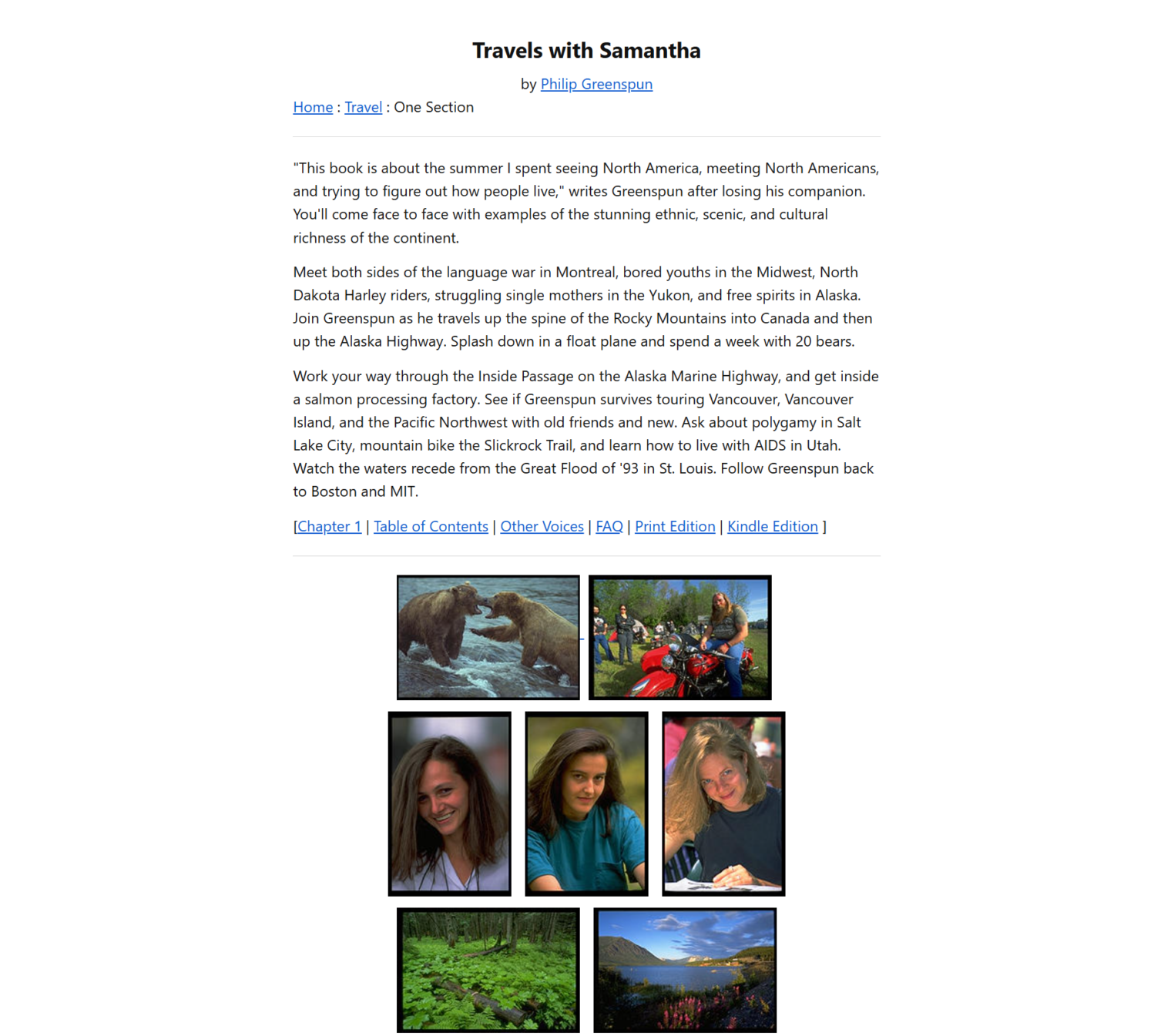

Since your site appears to be a collection of legacy HTML files with a shared CSS, you likely have “patterns.” Unlighthouse will let you see if your CSS change worked for the 1996 articles as well as it did for the 2007 articles by showing you a side-by-side gallery of screenshots for every page it finds.

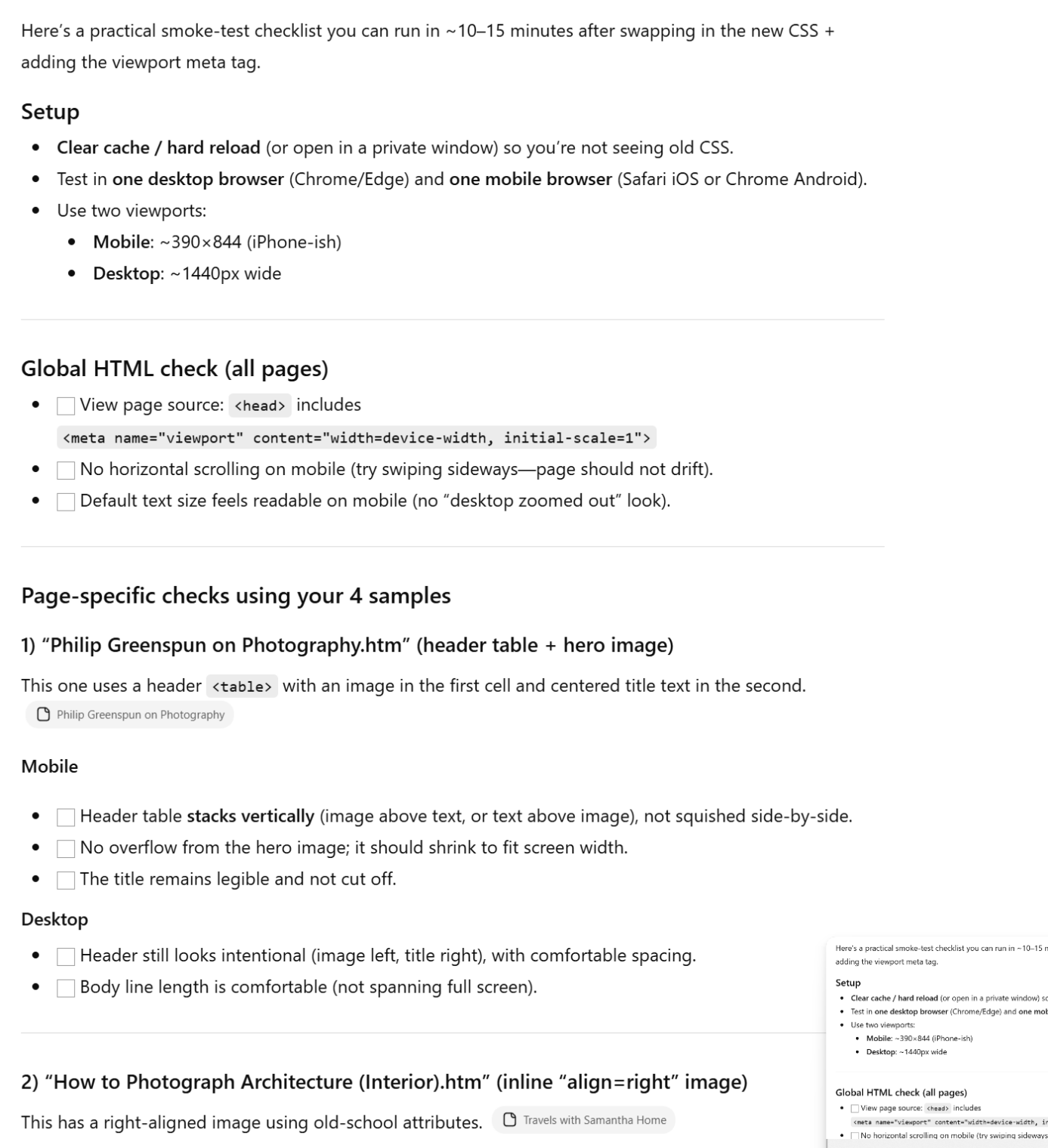

Summary Tip: If you want a quick check without installing anything, run Lighthouse on your three most different pages (e.g., a text-heavy page like “Chapter X,” a photo-heavy page like “Architecture,” and your Home Page). If those three pass the “Mobile Friendly” test, 90% of your site is likely safe.
