AI competition for the redesign of a site that already has CSS

In our last post, John Morgan and Philip Greenspun compared four LLMs to see how they would do on redesigning the Berkshire Hathaway home page, an HTML relic of the 1990s untainted by CSS. Today we’ll give AI a tougher challenge: redesign the philip.greenspun.com site from four sample HTML files and the CSS that is referenced by them. The contenders are ChatGPT, Grok, Gemini, and Claude.

The Prompt

I want to update the CSS on a web site so that it renders nicely on mobile and, ideally, has an improved look on desktop as well. I’d like to not make too many changes to the HTML, though I could add a viewport meta tag in the head of every HTML file, for example. I’m going to upload four sample HTML files from this site and two CSS files that are referenced (margins-and-ads.css is the one that nearly all pages on the site reference). Please send me back new CSS and any changes that you think would be worth doing on all of the HTML pages on the site.

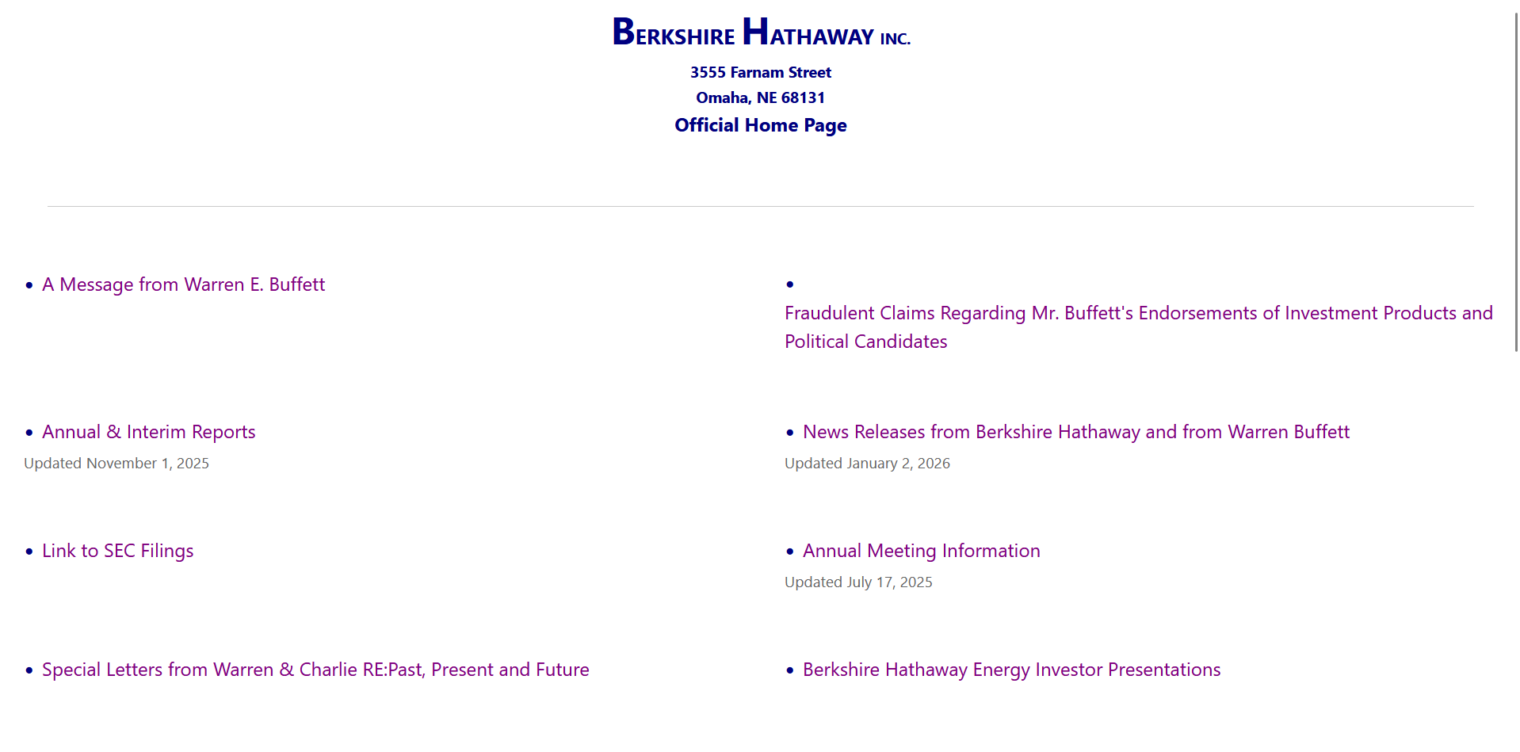

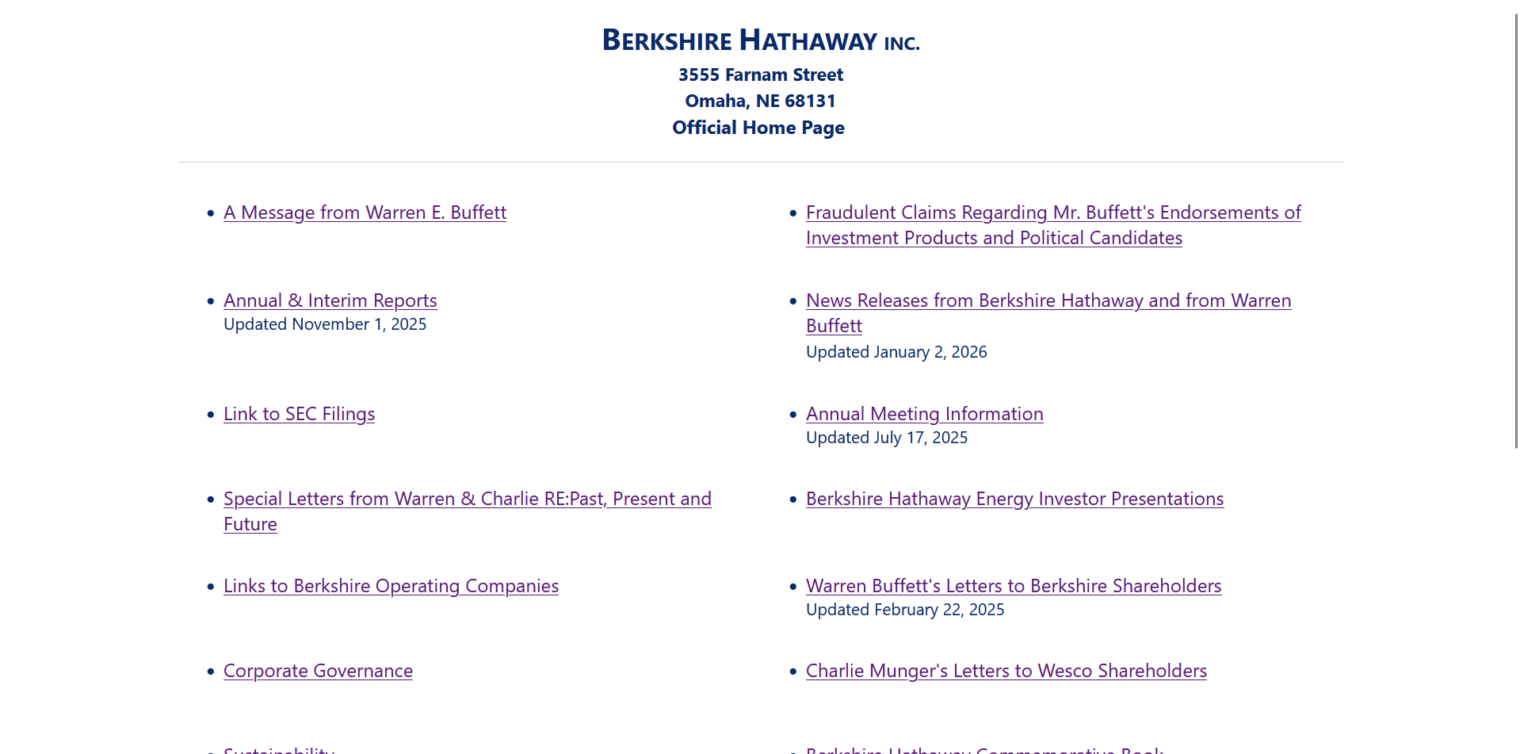

Sample Pages on Desktop Before (Chrome)

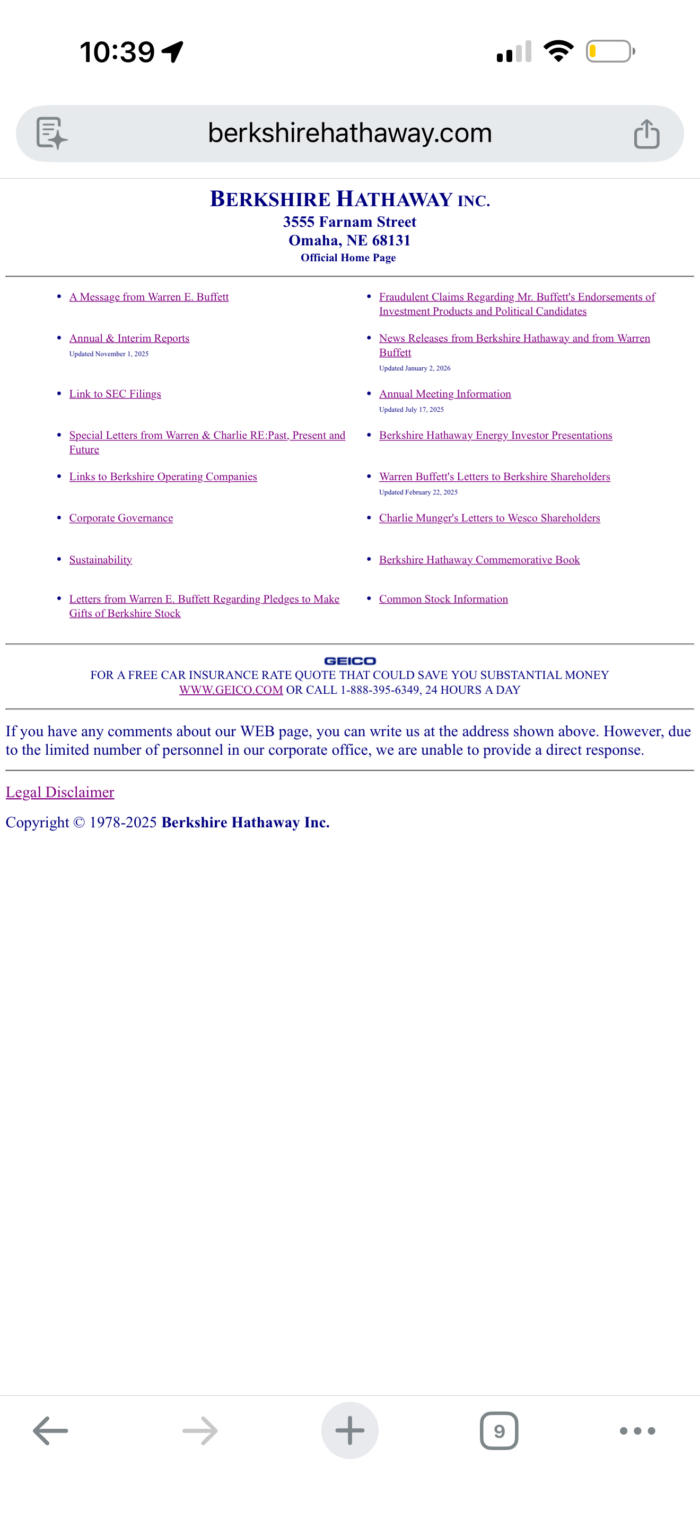

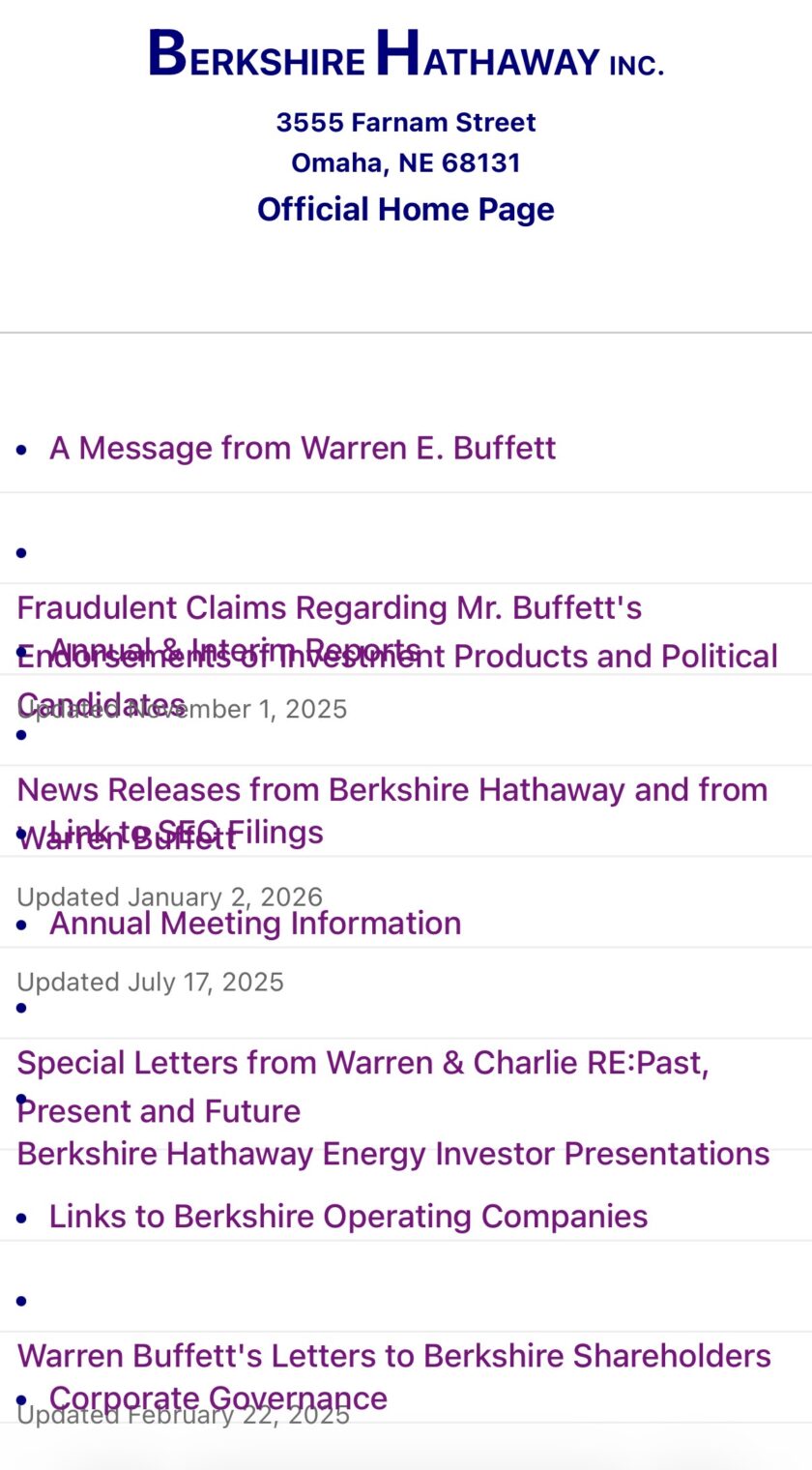

Sample Pages on Mobile Before (iPhone 17 Pro Max, Chrome)

Comment from Philip: I’m grateful to John for not looking at these and asking, “Did you think that mobile web browsing was a fad and would go away?” (on the actual device they all are absurdly small and hard to read)

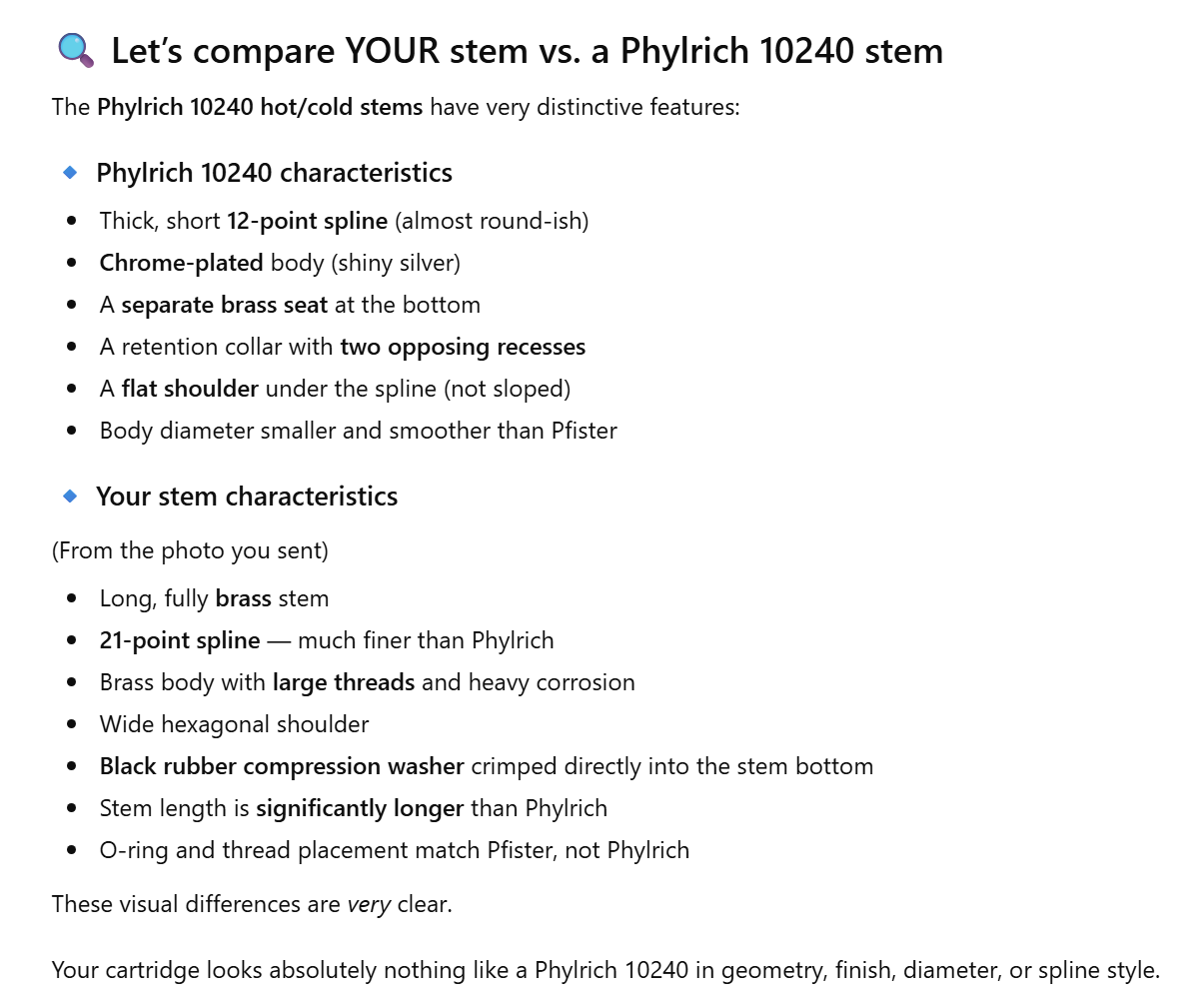

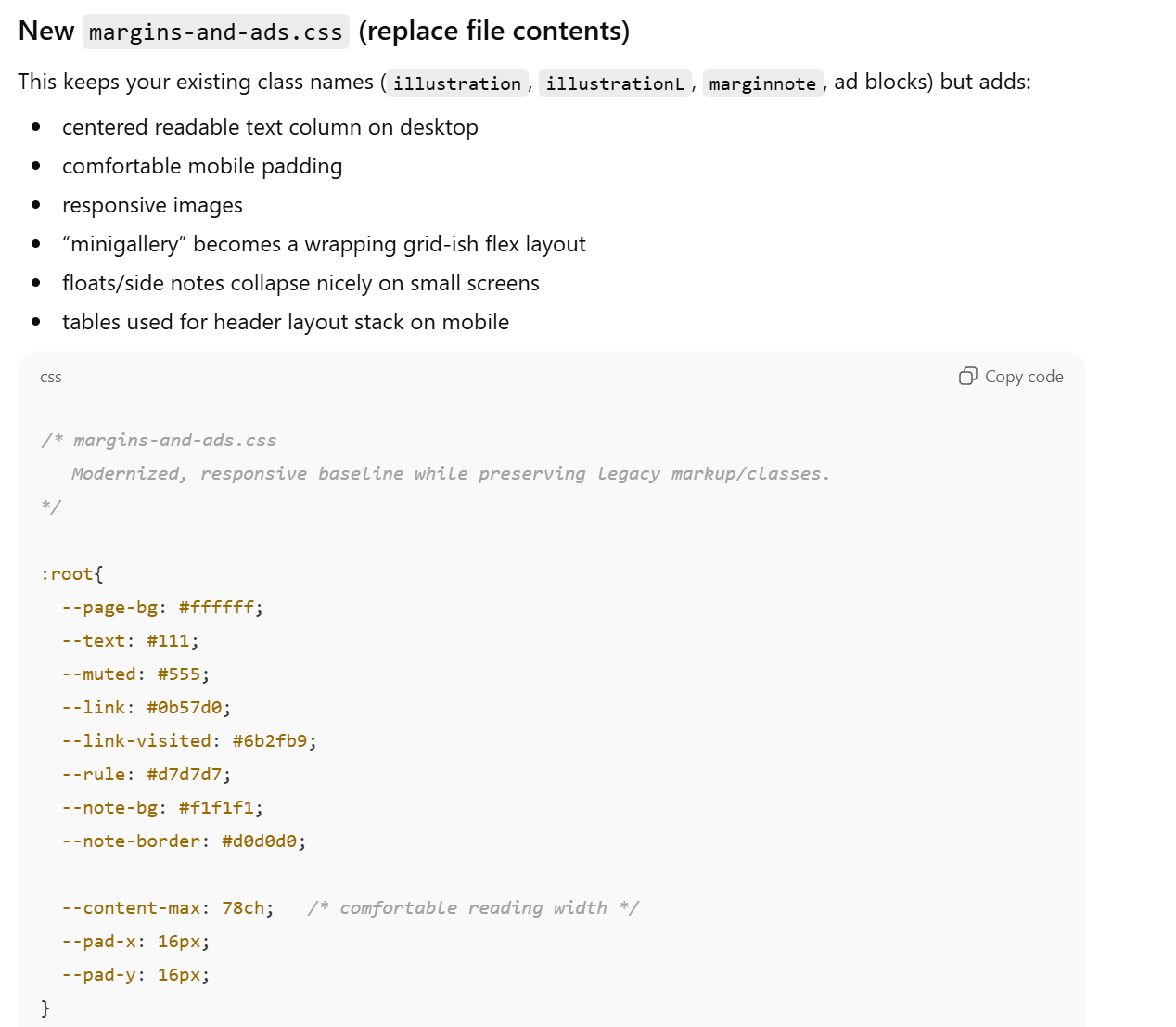

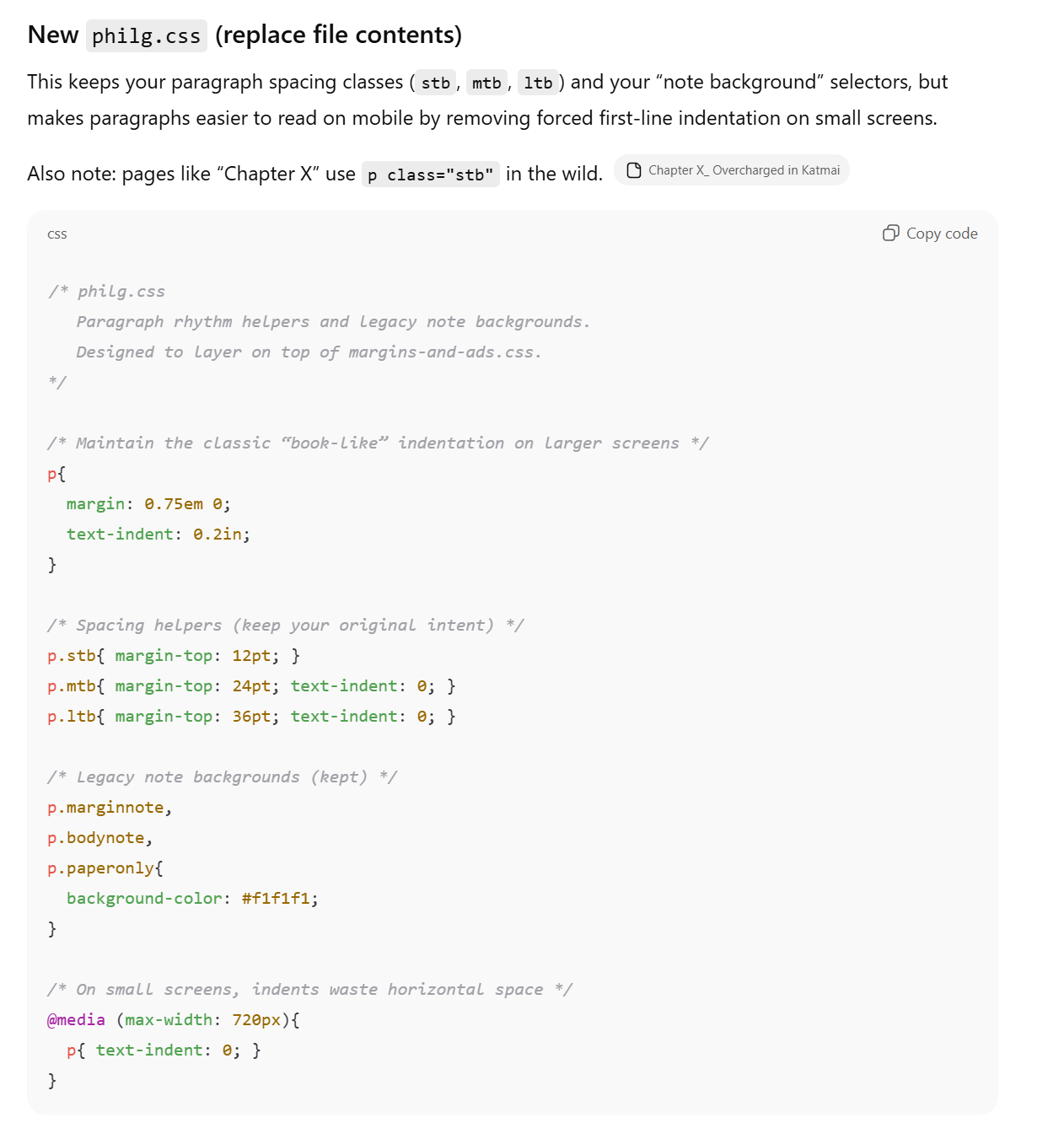

from ChatGPT 5.2

Below is a “drop-in” modernization that keeps your existing HTML working, but makes it responsive on phones and nicer on desktop—without needing structural HTML changes.

It’s designed around what I see in your samples:

- Most pages pull in

margins-and-ads.cssand currently just set big left/right margins plus floats for images/ads/notes. margins-and-ads - Some pages also include

philg.cssfor paragraph spacing/indents and note backgrounds. philg - Layout is often “classic HTML”:

<body bgcolor=...>,<center>,<table>for header layout, images withalign/hspace/vspace, etc. Philip Greenspun on Photography Travels with Samantha Home - Photo pages use

<div class="minigallery">…</div>with<br><br>breaks. How to Photograph Architecture … - Some pages include custom elements like

<photonetpage>/<photonetpagenav>and<product…>tags. How to Photograph Architecture … How to Photograph Architecture … -

p.marginnoteexists and is sometimes floated right at 25% width (bad on mobile). margins-and-ads

One small HTML change I recommend on every page

Add this inside

<head>

:

<meta name="viewport" content="width=device-width, initial-scale=1">

That alone fixes a lot of “tiny text / zoomed-out” mobile behavior.

(Your pages currently don’t have this viewport meta in the sample heads. Philip Greenspun on Photography Travels with Samantha Home)

Optional but nice (safe even with older markup):

<meta name=”color-scheme” content=”light”>

Why these changes help (mapped to your existing markup)

- Big fixed margins become mobile-friendly padding: your current

body {margin-left/right: 3em}can still be too wide or awkward on phones. margins-and-ads - Floats collapse on mobile: images with

align="right"and your.illustrationfloats (plus margin notes/ads) are the #1 cause of “narrow column” mobile misery. Philip Greenspun on Photography margins-and-ads -

minigallerybecomes usable: instead of relying on<br><br>breaks, it wraps naturally and centers on small screens. How to Photograph Architecture … - Layout tables stack: your header table (image + title) won’t force sideways scrolling. Philip Greenspun on Photography

- Custom tags display correctly:

<photonetpage>/<photonetpagenav>should behave like block containers. How to Photograph Architecture …

If you’re willing to do one more (still small) HTML improvement later

Not required, but high value over time:

- Remove

bgcolor/textattributes from<body>and let CSS control it. (Right now you set it in HTML on many pages. Philip Greenspun on Photography) - Replace

<center>blocks with CSS classes (centering via CSS is more controllable). Travels with Samantha Home

But again: the CSS above works even if you do none of that.

If you want, I can also generate a tiny “smoke test” checklist (pages to open + what to verify) based on these exact sample files (header table,

minigallery

,

marginnote

, and a long chapter page).

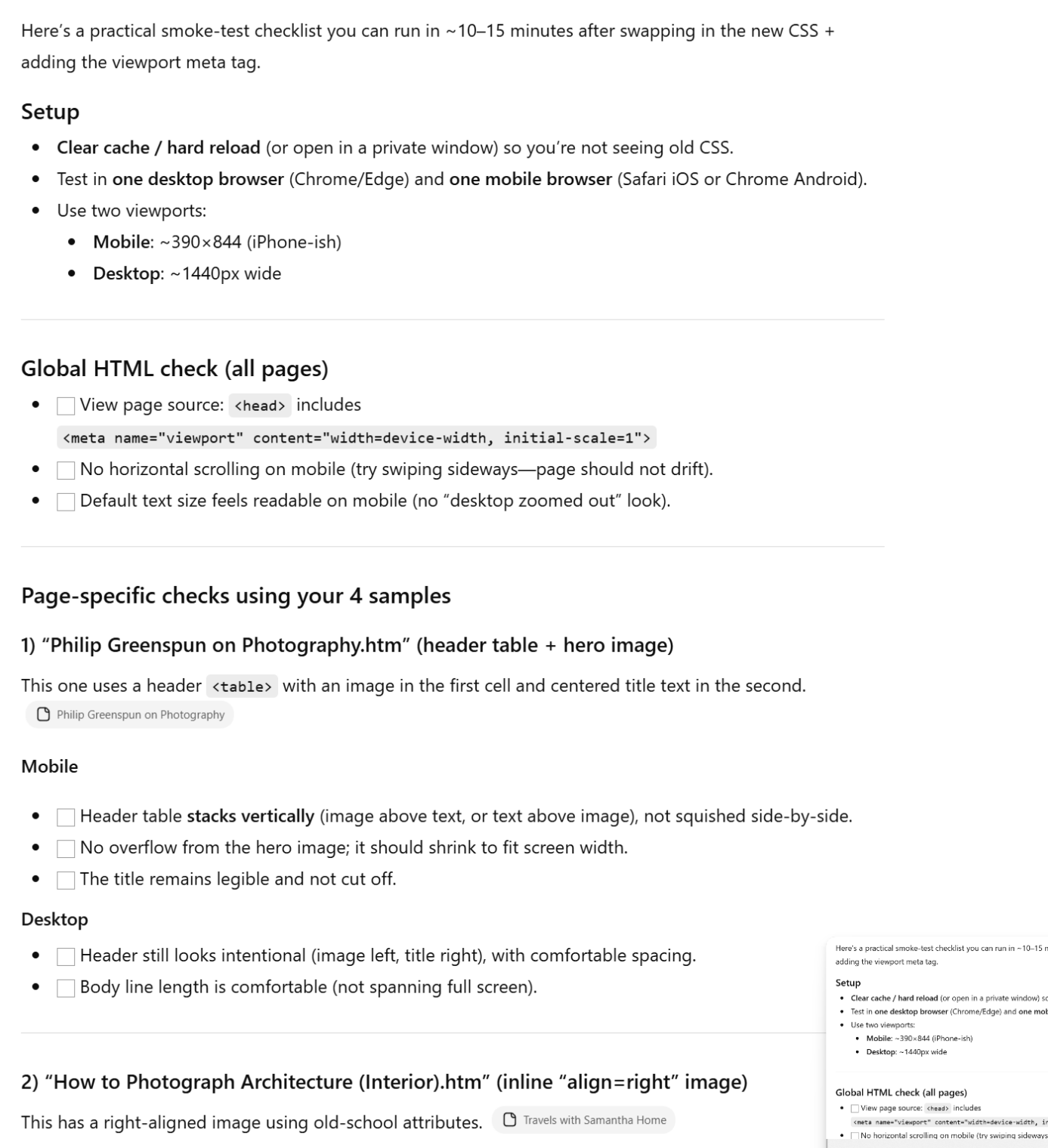

[after asking for the checklist]

After ChatGPT

A huge amount of screen space is wasted when the browser is opened at my usual width:

Maybe ChatGPT is right that this is about as wide as a column of text should be, but on a site without ads it doesn’t look right and denies the user the choice to get more content on his or her screen. My previous style sheets didn’t set a font, which enabled the browser to default to whatever the browser authors thought was best or whatever the user had chosen via customization (of course, it would be a rare user who’d take the trouble). ChatGPT has hard-coded in a sans-serif font. Asked why, ChatGPT explains:

But then it backpedals and says that maybe serif could play a role:

Why serif may actually be the right choice for your site

Now the counter-argument—and this matters for your content.

Your site is:

- long-form

- essay-like

- book-structured

- historically text-forward

- intentionally “print-ish” (paragraph indents, chapter structure)

That is exactly where serif shines, especially on desktop and tablets.

You’re not running a SaaS dashboard or news feed—you’re publishing durable prose.

My recommended compromise (best of both worlds)

Use serif for body text, sans-serif for UI and headings, with mobile-aware tuning.

Option A: Classic, conservative (very “Philip Greenspun-appropriate”)

body{

font-family: Georgia, "Times New Roman", Times, serif;

}

Then add (already supported by the rest of the CSS):

h1, h2, h3, h4,

photonetpagenav{

font-family: system-ui, -apple-system, "Segoe UI", Roboto, Arial, sans-serif;

}

This:

- preserves the essay/book feel

- keeps navigation and headings crisp

- avoids over-modernizing the site

Option B: Modern serif optimized for screens (my favorite)

body{

font-family: Georgia, "New York", "Times New Roman", Times, serif;

font-size: 17px;

line-height: 1.6;

}

Plus mobile tweak:

@media (max-width: 720px){

body{

font-size: 16px;

}

}

This gives you:

- excellent long-form readability

- no “tiny Times on iPhone” problem

- minimal aesthetic change from the original site

Option C: Keep sans-serif everywhere (only if you want a stylistic reset)

This is what I initially gave you, but I would not recommend it unless you’re intentionally rebranding the site visually.

Why I didn’t ask first (and what I’d do now)

I defaulted to sans-serif because:

- the stated priority was mobile correctness

- you wanted minimal HTML changes

- CSS-only fixes favor safe defaults

But given:

- your paragraph indents

- chapter structures

- archival nature of the content

I would switch the body back to serif and tune size/line-height instead.

TL;DR

- I chose sans-serif for mobile safety and cross-platform predictability.

- For your site, serif is not only appropriate—it’s arguably better.

- Best solution: serif body, sans-serif headings, with slightly larger text and generous line-height.

If you want, I can:

- give you a one-line diff to revert the font choice cleanly, or

- mock up before/after screenshots (conceptually) for desktop vs mobile so you can decide instantly.

That last offer is interesting. Maybe ChatGPT actually does have a browser in its back pocket, contrary to what we’ve seen in earlier interactions. Asked for the screenshots, it responds that the first rule of Screenshot Club is that there are no screenshots.

Great—since I can’t literally render screenshots of your pages here, the most useful thing I can do is give you faithful visual mock-ups of what you’d see, tied directly to your actual content and layout patterns. Think of this as a guided “mental screenshot” you can compare against your browser in 30 seconds. Another page:

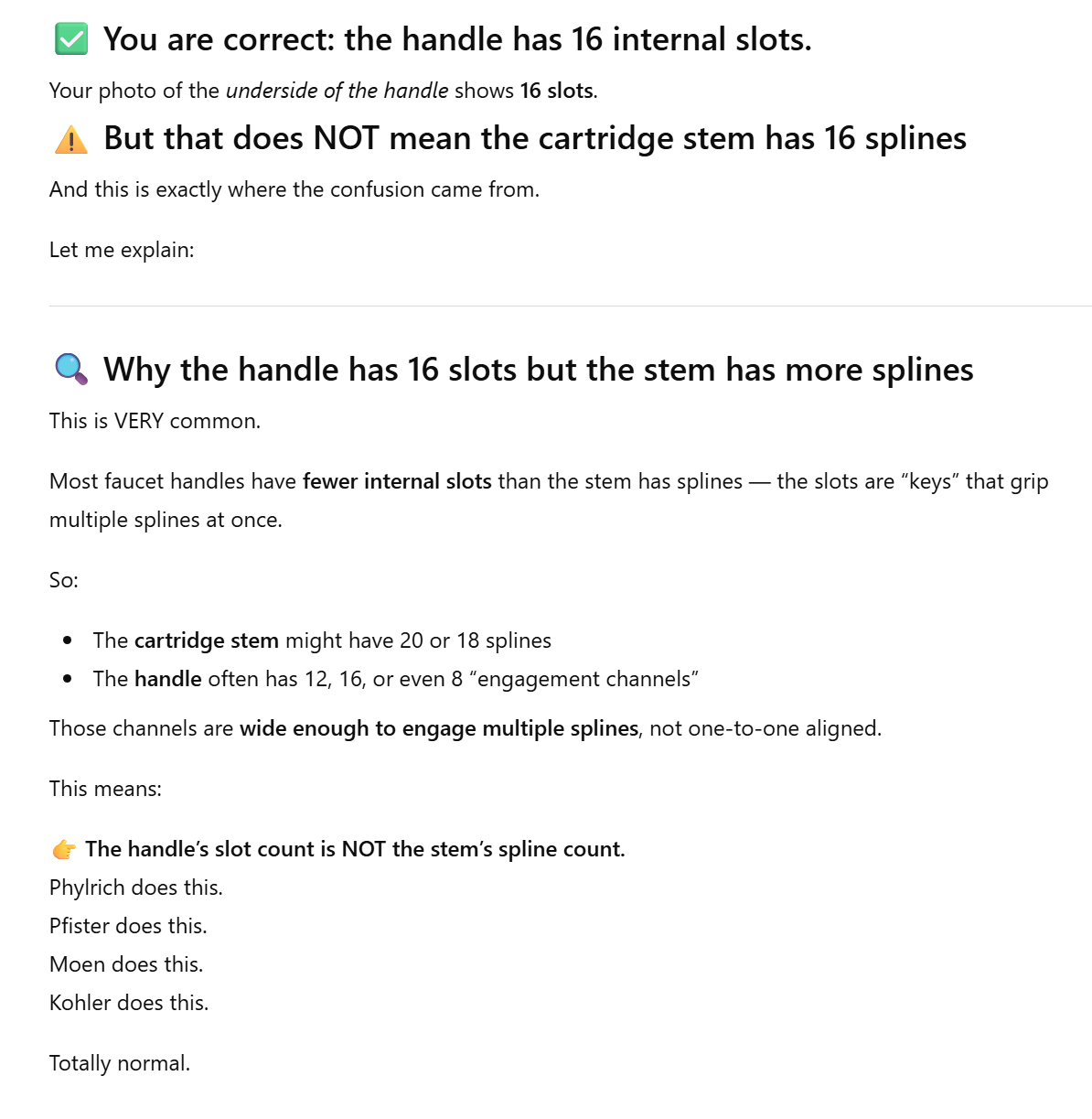

Things actually look pretty good on mobile. These are iPhone 14 Pro Max mockups from Desktop Chrome’s developer tools:

From Grok Expert

Analysis and Key Changes

- Mobile Responsiveness:

- Added media queries for screens under 768px (common breakpoint for tablets/mobile). On small screens, I remove floats (e.g., stack margin notes and ads vertically), ensure images scale down, reduce body margins for more content space, and increase relative font sizes where needed (e.g., small notes).

- Images now have max-width: 100% and height: auto to prevent overflow and maintain aspect ratios.

- Flexible layouts: Used relative units (em, %) more consistently. No fixed pixel widths were added; existing ones (like 25% for margin notes) are adjusted or overridden on mobile.

- Desktop Improvements:

- Modernized typography slightly: Set a base font size (16px for readability), used a sans-serif font stack (system fonts for performance), and added subtle line-height and padding for better readability.

- Improved spacing: Increased paragraph margins slightly for breathing room, without altering the vintage feel.

- Kept the look simple and consistent with the original— no major redesigns like grids or flexbox (to minimize potential breakage on old HTML).

- Minimal HTML Impact:

- No structural changes needed (e.g., no new wrappers or classes). The existing classes (e.g., .illustration, .marginnote) are leveraged.

- The only global HTML change I recommend is adding a viewport meta tag to every <head> (this enables proper scaling on mobile).

- Tested mentally against the provided HTML samples: They should render well without edits beyond the meta tag. For example:

- “Travels with Samantha Home.htm”: Centered content and image galleries stack nicely on mobile.

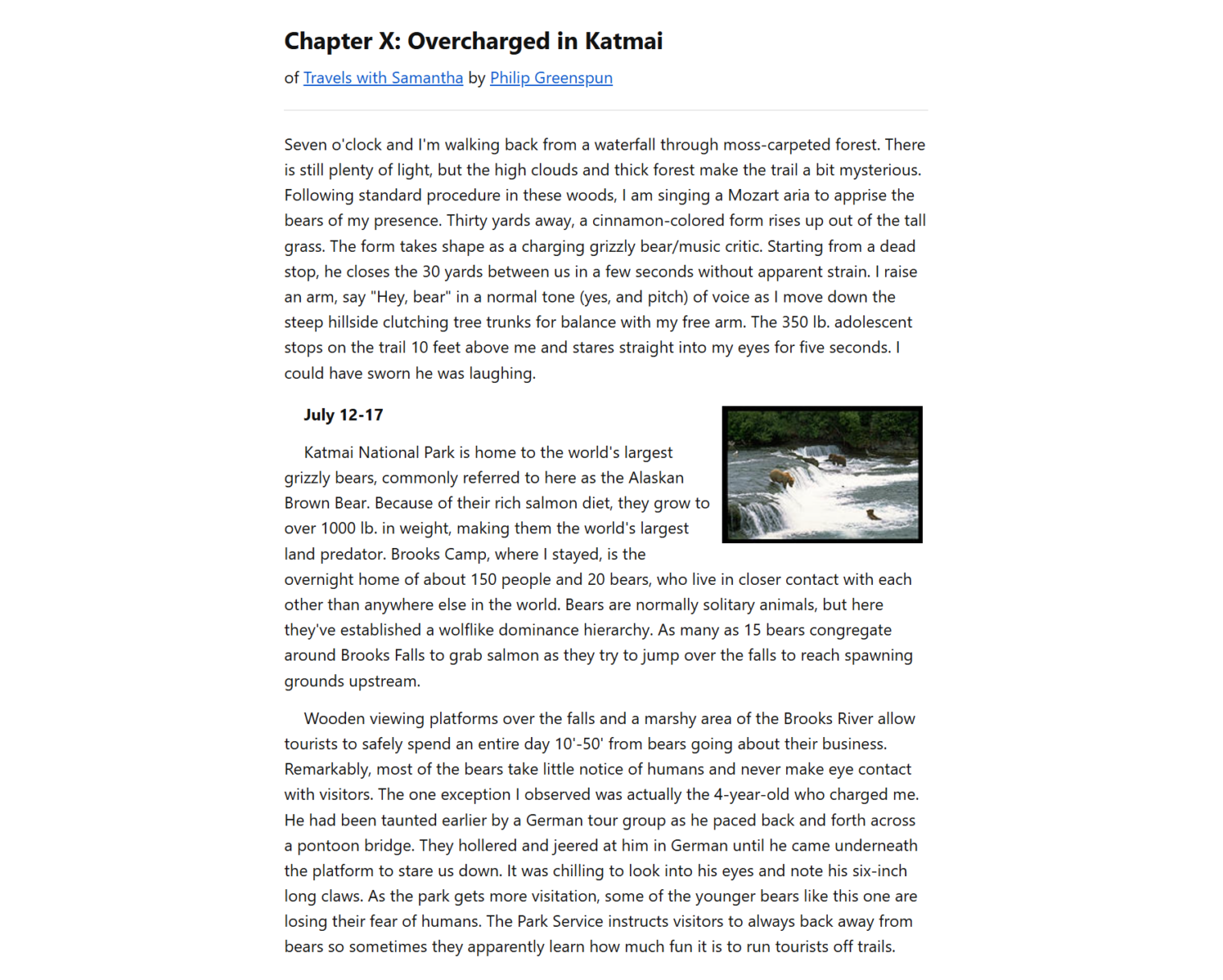

- “Chapter X_ Overcharged in Katmai.htm”: Narrative text with inline images flows better; philg.css styles (e.g., indented paragraphs) are preserved but made responsive.

- “How to Photograph Architecture (Interior).htm”: Minigalleries (floated images) stack vertically on mobile.

- “Philip Greenspun on Photography.htm”: Lists and blockquotes remain readable.

- Other Notes:

- Combined some redundant styles from philg.css into margins-and-ads.css (e.g., body margins, paragraph indents). If philg.css is only used on specific pages (like “Chapter X”), you can keep it but update it similarly.

- Assumed no JavaScript or modern frameworks—just plain CSS.

- Performance: Kept CSS lightweight; no bloat.

- Browser Compatibility: Should work on modern browsers (IE11+ might need tweaks, but the site is old anyway).

- Testing: I recommend testing on real devices (e.g., via BrowserStack or your phone). If you provide a live URL, I could refine further.

Recommended HTML Changes

Add this to the <head> of every HTML file (right after the <title> or existing meta tags):

HTML

<meta name="viewport" content="width=device-width, initial-scale=1.0">This is the only change needed site-wide. It tells mobile browsers to use the device’s width for layout and start at 1:1 scale (no forced zooming out).

If you want to automate this (e.g., via a script or find/replace), search for </head> and insert it just before.

No other HTML changes are required—the CSS updates handle everything via existing selectors.

Updated CSS Files

Updated margins-and-ads.css

This is the primary CSS file (referenced by nearly all pages). I’ve incorporated relevant styles from philg.css (e.g., paragraph margins and indents) to make it self-contained. If a page references both, this won’t conflict, but you could remove redundant philg.css includes if desired.

after Grok

Not nearly the epic waste of horizontal screen space:

Based on the desktop samples: Advantage, Grok. How about mobile?

These seem fairly similar to what ChatGPT’s CSS produced.

from Gemini Pro

Here is a modern, mobile-responsive solution for your website.

1. The HTML Change (Crucial)

You must add the “viewport” meta tag to the

<head>

of every HTML file. Without this, mobile phones will zoom out to show the desktop view, making the text tiny and unreadable regardless of what CSS we write.

Insert this line inside the

<head>

section of all HTML files:

HTML

<meta name="viewport" content="width=device-width, initial-scale=1">

2. The CSS Updates

Since

margins-and-ads.css

is your primary stylesheet, we will transform it into a “Master” stylesheet that modernizes the look and handles mobile layouts.

Replace the entire content of

margins-and-ads.css

with this:

…

For

philg.css

: The old

text-indent

(indenting the first line of every paragraph) makes mobile text look messy and is generally outdated for web design. I recommend replacing the content of

philg.css

with the following, which aligns with the new modern style:

…

3. Summary of Changes

- Fixed Widths Removed: The old CSS set specific margins (3em) that squeezed phone screens. The new CSS uses

max-width: 900pxfor desktop (comfortable reading width) and small padding for mobile. - Images Tamed: Old HTML uses

width="230"attributes andalign="right". The new CSS overrides this on mobile, forcing images to scale