Snowflake (SNOW) is valued at $62 billion and had 2020 revenue of $265 million with losses of $348 million (i.e., they lost more than 100 percent of revenue!). The company was at one time worth more than IBM (now at $120 billion).

How can a startup data warehousing company be worth a substantial fraction of Oracle’s $200 billion market cap? Oracle’s 2020 revenue (admittedly flat compared to 2019) was $39 billion with $10 billion in profit. Data warehousing is a small fraction of Oracle’s business; the company competes with SAP in the ERP market and sells its core RDBMS for transaction processing. Data warehousing is sometimes useful, but if a company’s Oracle systems were shut down the company wouldn’t be able to take orders, manufacture widgets, pay employees, pay vendors, etc. The actual operation of a business (which is what Oracle supports) has to be worth way more than sifting through data to learn that customers buy more alcohol after they’ve been locked down by state governors (what you can learn in a data warehouse).

Snowflake says that they’re doing something exciting layered on top of Amazon Web Services, but what if a lot of their customers are motivated by the fact that Snowflake is selling services to them at a loss? If Snowflake buys storage and compute from Amazon, then marks it down by 30 percent, plainly it is better to buy from Snowflake until and unless the party with investors’ money ends.

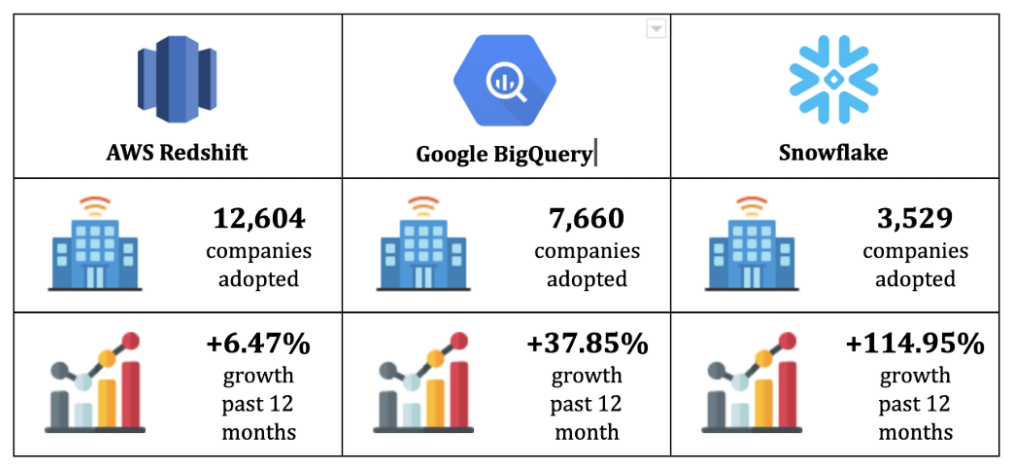

This guy liked Snowflake in 2018, but notes that it competes with a native Amazon offering: Redshift. The Gartner folks picked traditional data warehousing leader Teradata as superior to Snowflake in four out of four use cases. This mid-2020 comparison shows that AWS Redshift has substantially more customers, but Snowflake is growing rapidly:

The author does not come out strongly in favor of Brand A, G, or S:

Ultimately, in the world of cloud-based data warehouses, Redshift, BigQuery and Snowflake are similar in that they provide the scale and cost savings of a cloud solution. The main difference you will likely want to consider is the way that the services are billed, especially in terms of how this billing style will work out with your style of workflow. If you have very large data, but a spiky workload (i.e. you’re running lots of queries occasionally, with high idle time), BigQuery will probably be cheaper and easier for you. If you have a steadier, more continuous usage pattern when it comes to queries and the data you’re working with, it may be more cost-effective to go with Snowflake, since you’ll be able to cram more queries into the hours you’re paying for. Or if you have system engineers to tune the infrastructure according to your needs Redshift might just give you the flexibility to do so.

If “the main difference … is the way that the services are billed,” how can Snowflake be worth $60+ billion? Amazon and/or Google could simply change the way that they bill their services.

Readers: What am I missing? I hate to think that markets are wrong, but I can’t figure out how SNOW is worth $60 billion. In our current bubble, the average P/E ratio for the S&P 500 is 40 (15 is normal and Oracle is only at 17 right now). So SNOW would need $1.5 billion in annual profit to justify its current market cap. If the company settles in at Oracle’s fabulous 25 percent profitability, that would correspond to $6 billion in required revenue. Teradata (TDC), after 42 years in this business, has annual revenues of $1.83 billion, with profits of only $129 million. TDC’s market cap is $4.2 billion. If SNOW has not come up with new and better algorithms for analyzing data, how can they be worth more than the data warehousing businesses of IBM, Oracle, Teradata, Microsoft, Amazon, and Google combined?
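The back-of-the-envelope arithmetic above can be checked in a few lines. This is a rough sketch that uses only the figures quoted in the post (a $60 billion market cap, a P/E of 40, and a 25 percent profit margin); the function and variable names are made up for illustration.

```python
def required_profit(market_cap, pe_ratio):
    """Annual profit needed to support a market cap at a given P/E ratio."""
    return market_cap / pe_ratio


def required_revenue(profit, profit_margin):
    """Revenue needed to earn `profit` at a given net profit margin."""
    return profit / profit_margin


# Figures from the post, in billions of dollars.
snow_market_cap = 60.0
sp500_pe = 40.0
oracle_like_margin = 0.25

profit = required_profit(snow_market_cap, sp500_pe)
revenue = required_revenue(profit, oracle_like_margin)
print(f"Required profit at P/E 40: ${profit:.1f} billion")
print(f"Required revenue at 25% margin: ${revenue:.1f} billion")

# Same exercise at the "normal" P/E of 15 mentioned in the post.
normal_profit = required_profit(snow_market_cap, 15.0)
normal_revenue = required_revenue(normal_profit, oracle_like_margin)
print(f"Required profit at P/E 15: ${normal_profit:.1f} billion")
print(f"Required revenue at P/E 15: ${normal_revenue:.1f} billion")
```

At the "normal" P/E of 15, the same formula asks for $4 billion in profit and $16 billion in revenue.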

Happy April Fools’ Day again and remember that nobody is more foolish than an investor in a bull market! (Also remember that nearly all of my investment instincts, including refraining from buying Bitcoin, have proved to be wrong!)

Related:

- my own simple explanation of classical dimensional data warehousing in a SQL database (see also The Data Warehouse Toolkit if you want to catch up on this industry)

I know maybe a click above nothing about investing, but I have heard it said that the market can remain irrational longer than I can remain solvent.

Google’s BigQuery was for a while a stunning deal, because they didn’t bill for the compute time in queries, only for the amount of data ingested and output. So if you could stuff your compute-heavy app into a BigQuery SQL-like program, you were essentially getting hundreds or potentially thousands of worker compute cycles for free. They closed that loophole eventually.

Buy VTI and forget about the rest. (Not financial advice.)

I can’t figure it out either. Vertica sold to HP for $1B, and it also has better performance and price/performance than Snowflake. It has a cloud product that’s very similar.

The only differentiator is the “self-tuning”, but every database product claims that, until they don’t. It doesn’t support a wide mix of queries, because it doesn’t support multiple projections per table.

See also https://www.vertica.com/resource/cloud-database-performance-benchmark-vertica-in-eon-mode-amazon-redshift-and-unnamed-cloud-data-platform/ — the unnamed one is Snowflake.

#1) >100% revenue growth year to year and gross profit margin > 50%

#2) List of SaaS companies Buffett has invested in (“Snowflake”)

Technically competent people should never do investment analysis of tech companies. Who would have ever thought that inventing the blue screen of death would generate emperor-level wealth and power?

Regarding the “blue screen of death”: I used to advise investing in MS 30 years ago. It was clearly killing the competition on a combination of pricing, features, and accessibility. Its real competition in the business world, on the desktop and workstation, was OS/2, which was a buggy version of Windows NT several years before Windows NT was released. However, OS/2’s engineers bet on expensive RAM, while the Windows NT design rightly relied on ever-cheaper RAM. After the hip and hyped-up IBM MBA CEO randomly killed OS/2, businesses dropped it immediately.

SNOW may indeed follow the MS model, but MS already had deep market penetration, with tens of millions of customers, when it grew exponentially with the Windows 3 release, and it faced very dumb competition in the form of MBAs and the VisiCalc CEO. You cannot say the same about Big Tech today. And SNOW’s market penetration is fewer than 5,000 businesses, next to nothing in the software world.

Alan, how does a profit margin > 50% square with “revenue of $265 million with losses of $348 million”? The second implies no profit at all.

Alan: Warren Buffett himself says that he’s underperformed the S&P 500 in recent years (https://www.cnn.com/2019/02/25/investing/warren-buffett-sp-500-stocks).

Also, Buffett did not buy in at $240/share (the current price). He paid $120/share (see https://stocknews.com/news/snow-brk-a-brk-b-goog-ko-why-did-warren-buffett-invest-in-snowflake/ , which also says “Similar to Google, Snowflake’s software will only get more powerful with more users on its platform, uploading more data, and running more analytics.”). How does Snowflake get more powerful if users upload more data? Is the theory that companies will be happy to share their data with competitors and others? https://seekingalpha.com/article/4373917-berkshire-hathaways-big-bet-on-big-data-snowflakes-ipo describes an example of a bike-sharing company using a weather data set, but weather data are available to anyone via public sources.

I wonder if there is an agreement not to sell for a period of time. Otherwise we might hear that Berkshire Hathaway, which had been happy to buy at $120, was happy to sell at $240!

https://www.fool.com/investing/2021/01/28/where-will-snowflake-be-in-1-year/ says “Snowflake will likely remain the dominant data cloud company for the foreseeable future. Though it will probably not turn profitable over the next year, it should benefit from massive growth. However, the increases will not likely justify the stock’s triple-digit sales multiple. Moreover, the fact that average investors could not buy at Warren Buffett’s price probably means that they should not follow Berkshire’s example.”

Anonymous – gross profit margin is calculated using revenue and cost of goods sold (COGS); profit is calculated using revenue and total expenses.
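The distinction between the two margins can be sketched numerically. Revenue and net loss below are the figures quoted in the post; the COGS number is a hypothetical placeholder chosen only to illustrate a gross margin above 50 percent, not a figure from Snowflake’s filings.

```python
def gross_margin(revenue, cogs):
    """Gross margin: what is left after direct cost of goods sold."""
    return (revenue - cogs) / revenue


def net_margin(revenue, total_expenses):
    """Net margin: what is left after all expenses (COGS, R&D, sales, etc.)."""
    return (revenue - total_expenses) / revenue


revenue = 265.0   # $ millions, from the post
net_loss = 348.0  # $ millions, from the post
total_expenses = revenue + net_loss  # expenses implied by the quoted loss

cogs = 110.0      # $ millions, HYPOTHETICAL value for illustration only

gm = gross_margin(revenue, cogs)
nm = net_margin(revenue, total_expenses)
print(f"Gross margin: {gm:.0%}")
print(f"Net margin: {nm:.0%}")
```

With these assumptions the gross margin is positive and above 50 percent while the net margin is worse than negative 100 percent, so the two statements are not contradictory.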

Phil – your question was “what am I missing” – that is what I answered. If you had asked “is SNOW rationally priced”, the answer would have been no (and no stock is).

I think Google Cloud services has the most cutting edge technology for a bunch of massive parallel computing data chomping systems (e.g., Dataflow and BigQuery), and Dataflow can run either batched or streaming, but they are not very user friendly, and Google has little time and patience for hand holding the users. Like they will deprecate an API a year after they introduce it, because they can do that to their internal users of their in-house super-scalable systems with nobody complaining. It’s a whole different ball of wax to do that to an enterprise customer, who is used to APIs staying supported for 20 years.

But it doesn’t help to have the best tech if it’s not easy to use, so maybe some of these other services have invested a lot more in marketing to make it seem like their stuff is easier to use, even if it isn’t.

1. Storage is dirt cheap on AWS S3. Thus lots of enterprise data workflows are already using it (or are in the process of adopting it).

2. Snowflake decoupled compute and storage, so customers pay only for the storage (which is very cheap) and, for compute, only when they actually want to run some queries.

3. The warehouse is ‘elastic’ – in that, if you want faster queries, get larger instances & pay a bit more. If you want to reduce costs, maybe go with a smaller-sized engine, which would cost less.

Thus, the customer has flexibility in terms of costs.
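The billing model described in the three points above can be sketched as a toy calculation. All rates and sizes here are made-up placeholders, not Snowflake’s or AWS’s actual prices, and the function name is hypothetical.

```python
def monthly_cost(storage_tb, compute_hours, storage_rate_per_tb, compute_rate_per_hour):
    """Storage is billed continuously; compute only for hours a warehouse runs."""
    return storage_tb * storage_rate_per_tb + compute_hours * compute_rate_per_hour


# HYPOTHETICAL rates, for illustration only.
STORAGE_RATE = 25.0  # $ per TB per month
SMALL_RATE = 4.0     # $ per hour for a small warehouse
LARGE_RATE = 16.0    # $ per hour for a larger, faster warehouse

# A month with no queries: only storage is billed.
idle = monthly_cost(10, 0, STORAGE_RATE, SMALL_RATE)

# The same workload on a small warehouse (assumed to take 40 hours)...
small = monthly_cost(10, 40, STORAGE_RATE, SMALL_RATE)

# ...or on a larger one that is assumed to finish in 15 hours at a higher rate.
large = monthly_cost(10, 15, STORAGE_RATE, LARGE_RATE)

print(idle, small, large)
```

Under these assumed numbers the larger warehouse costs somewhat more for the month but returns results sooner, which is the trade-off point 3 describes.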

And in my opinion, one of the core reasons for their success is that they engineered this system very well; the thing ‘just works’.

(Disclaimer: my sister works there.)

btw, If you thought snow was overpriced, what do you think of mongo: https://finance.yahoo.com/quote/MDB?p=MDB&.tsrc=fin-srch

while we are at it, worth looking at databricks as well. Valued at 28B https://databricks.com/company/newsroom/press-releases/databricks-raises-1-billion-series-g-investment-at-28-billion-valuation

they do ‘data lakes’ – query your data straight on top of S3.

As somebody who wrote about half of Snowflake’s execution engine, I could offer some more things:

1. Snowflake is the only offering handling semi-structured data at RDBMS speed because it internally columnarizes it. Snowflake also supports tables with heterogeneous schemas internally (the table scanner actually compiles an extraction plan for every block!).

This allows users to replace conventional ETL pipelines with simple loading into Snowflake; that eliminates the need for synchronization between multiple groups in a typical customer org for any tiny schema update.

2. Snowflake by design has a minimal number of knobs for controlling query optimization. No need for DBAs anymore.

3. The big one: Snowflake is the only data warehouse offering that allows seamless data sharing between orgs. No more kludgey and notoriously unreliable data pipelines. Just give permissions, and your supplier or customer gets a view of the up-to-date data. This, together with multi-cloud capability, creates a powerful network effect: if a company has moved its data to Snowflake, it makes it more attractive for its affiliates to do the same.

It’s a social network for data, and comparing it to isolated warehouses like Redshift or Teradata is comparing apples to oranges.

averros: Congratulations on building a big part of something worth $60 billion!

To your point #3, “The big one”, how many orgs want to share data? Is that really a $60 billion feature?

To your point #1, how tough would it be for competitors to match the capability you’ve described? Unless SNOW has a bunch of patents, can’t Amazon Redshift match this? They have approximately 60 billion reasons to try!

@philg: “averros: Congratulations on building a big part of something worth $60 billion!”

Congratulations, averros, and I hope that you retained a chip of $60,000,000,000.

I just want to note that a “big part of the engine” and a “big part of the Snowflake system” are not the same thing. The engine is the thing that processes requests and pushes them through the workflow. It is very important, but the stuff that it pushes is the main part of the user functionality, which also needs to be programmed. I am saying this as someone who has built and worked on several software engines, both commercial ones and private ones that nevertheless pushed through a lot of dough, and who has also built a lot of functionality.

Low Skilled Immigrant – you are absolutely correct that the bulk of the code is not execution but query compilation & optimization, metadata handling, management frontends, security, etc., etc., etc. The exec engine has its own interesting challenges, though, as it has to be both performant AND absolutely reliable (loss or silent corruption of user data is absolutely unacceptable). My background in the Russian engineering school was quite helpful in making this happen.

Philg – organizations absolutely want and need to share data. Without Snowflake this is a real pain, since building a reliable and secure data pipeline and synchronization, with responsibility split between different companies, is very hard. There are even new business models that startup companies are trying to build based solely on this new capability for easy cross-org data sharing.

This is a real disruptor in government IT, supply chain management, and such. It also makes it easy for enterprise SaaS companies to cut corners on data access features (i.e., instead of writing code to provide this or that API for data access to their customers, they can simply expose their internal tables, with Snowflake providing access control and any needed data access restrictions or mangling for PII and such).

Massive data sharing was already a thing when Simson Garfinkel wrote Database Nation. It’s 100x these days and will become even more prevalent in the future as companies find new ways to exploit this easy-to-use capability.

@averros

Congratulations!

>>> It’s a social network for data, and comparing it to isolated warehouses like Redshift or Teradata is comparing apples to oranges.

This is an interesting perspective. Also, by sharing, do you mean that Snowflake ‘hard-links’ the data?

Also, what’s your take on Dremio and data lake engines like Databricks in general?

(I mean, does Snowflake see them as threats?)

I feel like shorting anything on the precipice of an inflationary burp is kind of a bad idea. I mean what’s a thousand bucks worth right now?

SuperMike: I agree with you in general that the $trillions in money-printing are generating inflation, at least for the stuff that people with money buy. But I think it is possible to make money if Snowflake underperforms the rest of the market, even if we go back to Jimmy Carter-era inflation. The money obtained from shorting Snowflake isn’t left in a checking account, but instead invested in the S&P 500. Absent transaction costs (which can be huge if a lot of other people want to short the same stock), this makes money so long as the S&P 500 does better (dividends plus appreciation) than Snowflake.
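The pair trade described above can be sketched as follows. This is a simplified model that ignores margin requirements and taxes; the function name, the dollar amount, and the return figures are all hypothetical.

```python
def hedged_short_pnl(notional, short_return, long_return, borrow_cost_rate=0.0):
    """Profit or loss from shorting `notional` dollars of one stock and
    investing the proceeds in another asset.

    Returns are total returns (dividends plus appreciation) over the holding
    period, expressed as fractions. `borrow_cost_rate` stands in for
    transaction and stock-borrowing costs as a fraction of notional.
    """
    short_leg = -notional * short_return  # a rising shorted stock loses money
    long_leg = notional * long_return     # proceeds invested in, e.g., the S&P 500
    costs = notional * borrow_cost_rate
    return short_leg + long_leg - costs


# HYPOTHETICAL scenario: inflation lifts everything, but the index does better.
inflation_case = hedged_short_pnl(1000.0, short_return=0.10, long_return=0.20)

# Same scenario with a 3 percent borrowing cost.
with_costs = hedged_short_pnl(1000.0, 0.10, 0.20, borrow_cost_rate=0.03)

# The trade loses if the shorted stock outperforms the index.
losing_case = hedged_short_pnl(1000.0, short_return=0.30, long_return=0.20)

print(round(inflation_case, 2), round(with_costs, 2), round(losing_case, 2))
```

The sign of the result depends only on the difference between the two returns (less costs), not on the absolute level of prices, which is why inflation alone does not sink the trade.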

https://www.marketbeat.com/stocks/NYSE/SNOW/short-interest/ says that about 5 percent of Snowflake’s shares are held short right now. For Microsoft it is 0.67 percent (https://www.marketbeat.com/stocks/NASDAQ/MSFT/short-interest/). For Oracle it is 1.74 percent (https://www.marketbeat.com/stocks/NYSE/ORCL/short-interest/).

Nice excerpt from your SQL book – this part alone is gold : “Data warehousing is useful when you don’t know what questions to ask.”

The link to Ralph Kimball’s book brought back some memories. I designed & built the start of a warehouse for a major Higher Ed institute using this book in the nineties. My favorite warehouse story of that time was of a large retailer that found out from its warehouse that customers tended to buy more beer and toilet paper on Thursday evenings (who knows why!), so the stores put those items up front on Thursdays.

It is a superior product in the data warehouse space; it far outperforms Redshift, and Oracle doesn’t _really_ have anything in the same category. Google BigQuery is the only competitor.

Agree with this comment, it does just work. Easiest, fastest, most reliable.

https://philip.greenspun.com/blog/2021/04/01/short-snowflake/#comment-353245

Concerns:

1. Their ability to stay competitive on pricing when their suppliers (AWS, Azure, GCP) are also their competitors, though I suppose they are betting on being able to arbitrage across the three for consistently low storage and compute costs.

2. A new competitor is building the same thing; see http://www.firebolt.io

Fortunately my brain is much smoother than yours so my investment history is (marginally) better. Still I have avoided Snowflake, Bitcoin (well, after a brief fling in 2017), and GameStop. I am long Oracle, we’ll see how it goes.

Were you really wrong on Bitcoin? Suppose you had invested years ago, and parked your coins at a leading exchange like, say, Mt. Gox.

You’d still be better off with a dull index fund investment.

J: I probably would have lost the Post-It note with the seed phrase if I’d bought Bitcoin. I wouldn’t have needed Mt. Gox’s help to lose it all.